Space Data Core: Serving Earth AI | $SPACEDC

$SPACEDC

https://pump.fun/coin/D6keu7Qs75XL8mffUR1vDhPgUy87YT8TKAYJNSoDpump

THE ORBITAL IMPERATIVE: The Thermodynamic and Economic Necessity of the Extraterrestrial Data Infrastructure

The Entropy Boundary

It is a fundamental axiom of thermodynamics that information processing is a physical act. It is not, as the layperson believes, a cloud-like abstraction that exists in a pristine, ethereal realm. To compute is to manipulate the physical state of matter—to shuttle electrons across a band gap, to flip magnetic domains, to charge capacitors. This process is inherently dissipative. According to Landauer’s Principle, the erasure of information—the resetting of a bit—carries a mandatory thermodynamic cost, a minimum amount of heat that must be released into the environment.

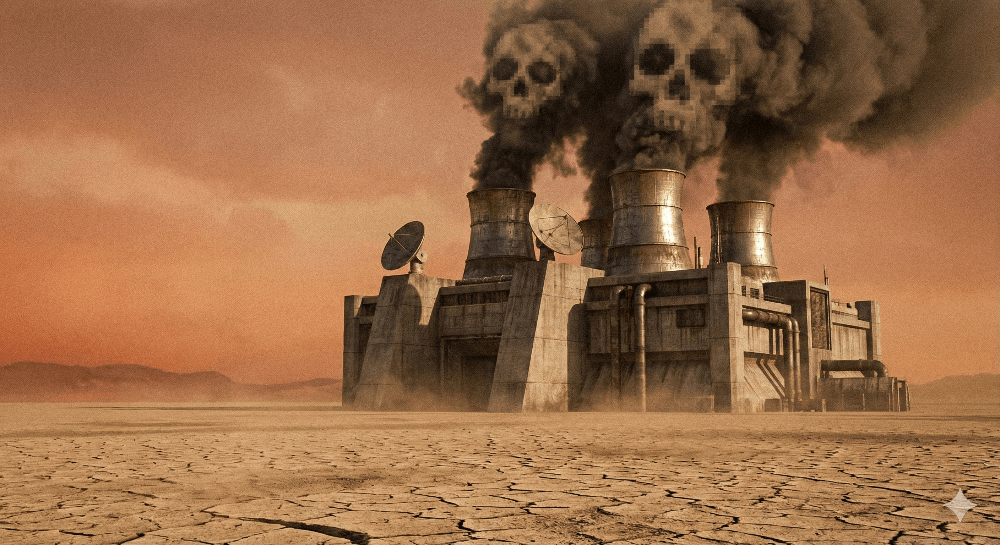

For the last seventy years, humanity has been content to release this heat into the biosphere of its home planet. We have built our "clouds" on the ground, burning fossilized carbon to generate electricity, pushing that electricity through resistive silicon, and then burning yet more energy to pump water to cool the silicon. It is a cycle of grotesque inefficiency. We are essentially heating our own habitat to perform logic.

As a scientist observing the trajectory of the species, I must state with absolute clarity: the current paradigm is a terminal dead end. We are approaching the Planetary Entropy Barrier. The exponential growth of Artificial Intelligence (AI), with its insatiable demand for floating-point operations (FLOPs), is colliding with the finite thermal and hydrological capacity of the terrestrial surface.

This report is not speculative fiction. It is a rigorous engineering analysis of the only viable solution: the migration of the planetary computational substrate to Earth Orbit. We will dissect the physics of radiative cooling in the vacuum, the surprising resilience of commercial silicon to cosmic rays, and the emerging geopolitical reality of "Data Havens" in the sky. We will analyze the recent capital flows, such as the significant seed funding secured by SpaceComputer 1, which signals the market's awakening to this inevitability.

The thesis is simple: A civilization that wishes to expand its mind (compute) without destroying its body (biosphere) must elevate its brain to the cold, sterile, and infinite heat sink of the void.

The Terrestrial Failure Mode: A Calculus of Inefficiency

To understand the necessity of the orbital data center (ODC), one must first ruthlessly audit the failures of the terrestrial data center. The modern data center is an engineering compromise, optimized for an era of low-density internet traffic, not for the gigawatt-scale thermal loads of the AI era.

The Hydrological Hemorrhage

The most immediate bottleneck is not electricity, but water. High-performance computing chips, such as the NVIDIA H100, have pushed thermal design power (TDP) densities to levels where air cooling is no longer sufficient. We have turned to liquid cooling and evaporative cooling towers.

The mechanism is simple thermodynamics: water has a high latent heat of vaporization. By evaporating water, we reject heat into the atmosphere. However, this consumes the water. It is "Scope 1" consumption—the water is lost to the local watershed.
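
To put a number on this mechanism, here is a minimal sketch of how much water must evaporate to carry away a given heat load. The latent heat value (about 2.4 MJ/kg near ambient temperature) and the 1 MW example load are illustrative assumptions, not figures from the cited research, and the sketch treats all heat as rejected by evaporation, so it is an upper bound.

```python
# Assumed latent heat of vaporization of water near ambient temperature (J/kg).
LATENT_HEAT_J_PER_KG = 2.4e6
SECONDS_PER_DAY = 86_400


def evaporated_liters_per_day(heat_load_w: float) -> float:
    """Water evaporated per day if the whole heat load leaves as vapor.

    One kilogram of water is treated as one liter.
    """
    joules_per_day = heat_load_w * SECONDS_PER_DAY
    return joules_per_day / LATENT_HEAT_J_PER_KG


if __name__ == "__main__":
    # Hypothetical 1 MW thermal load, roughly ten dense AI racks.
    print(f"{evaporated_liters_per_day(1e6):,.0f} L/day")
```

Under these assumptions a single megawatt of heat costs on the order of tens of thousands of liters per day, which is why the totals scale so quickly with facility size.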

The statistics are staggering. Research indicates that training a single large language model, such as GPT-3, results in the direct evaporation of approximately 700,000 liters of freshwater.2 This is merely the training phase. The inference phase, the daily use of the model by millions of users, consumes far more in aggregate.

In 2023, data centers in the United States alone consumed an estimated 66 billion liters of water.2 This consumption is concentrated in specific "availability zones"—Northern Virginia, Arizona, Silicon Valley—regions that are often already facing hydrological stress. We are witnessing a collision between the digital economy and the biological economy. In a drought-stricken future, who gets the water? The server farm training the AI, or the farm growing the wheat?

In orbit, water consumption is zero. The cooling loop is closed. Heat is not rejected by evaporation; it is rejected by radiation. The hydrological footprint of an orbital data center is null.

The Carbon-Energy Feedback Loop

The terrestrial data center is tethered to the terrestrial grid. While major operators (Google, Microsoft, Amazon) purchase "Renewable Energy Certificates" (RECs) to claim carbon neutrality, the physics of the grid tells a different story. Electrons are fungible. When the wind stops blowing in Iowa, the data center in Iowa draws power from coal or natural gas peaker plants.

Furthermore, the efficiency of the terrestrial chain is poor. We lose energy at every step:

- Generation: ~40-50% efficiency for thermal plants.

- Transmission: ~5-8% loss in the high-voltage lines.

- Distribution: ~3-5% loss in local step-down.

- Conversion: ~5% loss in the PSU (Power Supply Unit) of the server.

- Cooling: An additional 10-40% energy penalty (PUE of 1.1 to 1.4) to remove the waste heat.
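
The losses listed above compound multiplicatively. The sketch below chains them to estimate what fraction of the fuel's energy ends up doing useful work at the chip. How the stages are chained (in particular, treating PUE as the ratio of facility power to server power and applying the PSU loss afterwards) is an assumption of this sketch, and the function name is hypothetical.

```python
def delivered_fraction(gen_eff: float, transmission_loss: float,
                       distribution_loss: float, psu_loss: float,
                       pue: float) -> float:
    """Fraction of primary (fuel) energy that reaches the silicon."""
    # Energy that arrives at the facility meter.
    at_facility = gen_eff * (1 - transmission_loss) * (1 - distribution_loss)
    # PUE = facility power / IT power, so only 1/PUE reaches the servers.
    at_server = at_facility / pue
    # The server power supply takes its own cut.
    return at_server * (1 - psu_loss)


if __name__ == "__main__":
    # Ends of the ranges quoted in the list above.
    best = delivered_fraction(0.50, 0.05, 0.03, 0.05, 1.1)
    worst = delivered_fraction(0.40, 0.08, 0.05, 0.05, 1.4)
    print(f"best case:  {best:.1%}")
    print(f"worst case: {worst:.1%}")
```

With the quoted ranges, somewhere between roughly a quarter and two fifths of the primary energy reaches the chip. The rest is lost before any logic is performed.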

In contrast, the Orbital Data Center operates on a Source-to-Load model. The solar array (Generation) is wired directly to the compute rack (Load). There is no transmission grid. There are no peaker plants. The energy source is the fusion reactor at the center of our solar system (Sol), and the transmission medium is the vacuum of space, which has zero resistance to photons.

As noted by the Starcloud consortium (formerly Lumen Orbit), a data center in orbit could achieve "10x carbon-dioxide savings over the life of the data center" compared to a terrestrial equivalent, even when factoring in the significant carbon cost of the rocket launch.3 This is the efficiency of direct interception.

The Thermal Density Limit

We are running out of space to put the heat. The "Power Density" of a modern AI rack is approaching 100 kW per cabinet. Terrestrial buildings are struggling to move enough air or water through the facility to keep the chips from melting. We are reaching the limits of convective heat transfer.

The vacuum offers a different regime. It offers us the ability to design structures that are not constrained by gravity or wind load. We can deploy radiators the size of football fields, made of gossamer-thin graphite, to reject gigawatts of heat. We are not limited by the building code; we are limited only by the Stefan-Boltzmann law.

The Physics of the Void: Radiative Thermodynamics

The layman often objects: "But space is a vacuum! A vacuum is a thermos flask! How can you cool a computer in a thermos?"

This objection betrays a pedestrian understanding of heat transfer physics. There are three modes of heat transfer: Conduction, Convection, and Radiation. A vacuum flask prevents the first two. It does not prevent the third. In fact, in the absence of an atmosphere to scatter or absorb, radiation becomes the dominant and highly predictable mode of transport.

The Stefan-Boltzmann Law: The Governing Equation

The cooling power of an orbital radiator is governed by the Stefan-Boltzmann Law. For an Obsessive German Scientist, this equation is poetry. It states that the total power radiated per unit surface area is proportional to the fourth power of the thermodynamic temperature.

The equation is:

$$Q_{rad} = \epsilon \cdot \sigma \cdot A \cdot (T_{rad}^4 - T_{sink}^4)$$

Where:

- $Q_{rad}$ is the heat rejected (Watts).

- $\epsilon$ is the Emissivity of the radiator surface (a value between 0 and 1). We use high-emissivity coatings (like Z93 white paint or specialized ceramics) to drive this close to 0.95.

- $\sigma$ is the Stefan-Boltzmann constant ($5.67 \times 10^{-8} \, W \cdot m^{-2} \cdot K^{-4}$).

- $A$ is the Surface Area ($m^2$).

- $T_{rad}$ is the temperature of the radiator (Kelvin).

- $T_{sink}$ is the background temperature of deep space.

This is the critical advantage. On Earth, the "sink" temperature is the ambient air, often 313 K (40°C) in summer. In deep space, the sink temperature ($T_{sink}$) is the Cosmic Microwave Background, which is approximately 2.7 K.

This massive $\Delta T$ (Temperature Difference) allows for immense heat rejection efficiency. Furthermore, the $T^4$ term means that as we allow our chips to run hotter, the efficiency of the radiator skyrockets. A silicon chip operating at 350 K (77°C) is a potent emitter of infrared energy.
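
As a worked instance of the equation, the sketch below sizes a radiator for a given heat load. It uses the emissivity (0.95), the constant $\sigma$ and the 2.7 K sink from the definitions above. The 1 MW load and the comparison at 300 K are illustrative assumptions, and the result is an idealized single-sided area that ignores absorbed sunlight and Earth infrared.

```python
SIGMA = 5.67e-8  # Stefan-Boltzmann constant, W m^-2 K^-4


def radiated_flux(t_rad_k: float, t_sink_k: float = 2.7,
                  emissivity: float = 0.95) -> float:
    """Net radiated power per unit area (W/m^2)."""
    return emissivity * SIGMA * (t_rad_k**4 - t_sink_k**4)


def radiator_area(heat_w: float, t_rad_k: float, t_sink_k: float = 2.7,
                  emissivity: float = 0.95) -> float:
    """Radiator area (m^2) needed to reject heat_w watts."""
    return heat_w / radiated_flux(t_rad_k, t_sink_k, emissivity)


if __name__ == "__main__":
    for t_rad in (300.0, 350.0):
        flux = radiated_flux(t_rad)
        area = radiator_area(1e6, t_rad)
        print(f"T_rad = {t_rad:.0f} K: {flux:.0f} W/m^2, "
              f"{area:.0f} m^2 per MW")
```

Raising the radiator from 300 K to 350 K nearly halves the required area, which is the practical meaning of the $T^4$ term.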

The Solar Flux and the View Factor

However, the void is not empty of energy. In Low Earth Orbit (LEO), a satellite is bathed in intense radiation from two sources:

- Direct Solar Flux ($G_s$): Approximately 1,360 W/m².4 This is the energy we harvest to run the computer.

- Planetary Albedo & IR: The Earth reflects sunlight (Albedo ~0.3) and emits its own infrared heat (Earth IR ~240 W/m²).

The engineering challenge, therefore, is Thermal Geometry. We must design the data center such that the solar panels face the Sun and the radiators face Deep Space (zenith-pointing, or edge-on to both the Sun and the Earth), while never allowing the radiators to see the Sun or the hot Earth.

This requires active attitude control and specific orbital selection, such as a dawn-dusk Sun-Synchronous Orbit, where the satellite rides the terminator line, keeping the solar panels in near-constant light and the radiators in constant shadow.

Comparative Analysis: Earth vs. Orbit Cooling

| Physical Parameter | Terrestrial Data Center | Orbital Data Center |
| --- | --- | --- |
| Heat Rejection Mode | Convection / Evaporation | Radiation (Photonic) |
| Sink Temperature ($T_{sink}$) | ~290 - 320 K (Variable) | ~3 K (Constant) |
| Cooling Medium | Air / Water | Vacuum / Graphite Composite |
| Water Consumption | High (Evaporative Loss) | Zero (Closed Loop) |
| Thermal Inertia | High (Concrete/Steel) | Low (Lightweight Structures) |
| Power Density Limit | Limited by Airflow/Piping | Limited by Radiator Area |

As illustrated, the orbital environment offers a thermodynamically superior sink. The challenge is merely structural: deploying large enough radiator wings to handle the load. But in zero gravity, size is cheap.

The Silicon Substrate: Radiation Hardness and the COTS Revolution

For decades, the space industry operated under a dogma of fear regarding radiation. The assumption was that any electronic device placed in orbit would be instantly fried by the Van Allen belts unless it was "Radiation Hardened" (Rad-Hard).

This dogma produced chips that were incredibly robust, incredibly expensive, and incredibly slow. A "Space Grade" processor might cost $200,000 and offer the performance of a 1990s pocket calculator. This is unacceptable for AI training, which requires TFLOPS (Tera Floating-Point Operations Per Second).

The Google Research Breakthrough: Trillium

We must turn our attention to the groundbreaking empirical work conducted by Google Research on their Trillium TPU (v6e). They asked the forbidden question: "What happens if we just put a normal AI chip in a proton beam?"

The results were shocking to the traditionalists.

"No hard failures were attributable to TID up to the maximum tested dose of 15 krad(Si) on a single chip, indicating that Trillium TPUs are surprisingly radiation-hard for space applications." 6

This single sentence dismantles the primary technical barrier to orbital AI. 15 krad(Si) is a significant dose. In a typical Low Earth Orbit (LEO), with 4-5mm of aluminum shielding, the annual dose might be 1-2 krad. At that rate it takes roughly 7 to 15 years to accumulate even the maximum tested dose, so a standard, commercial TPU should comfortably survive a 5 to 7 year mission before TID degradation becomes the limiting factor.
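
The dose budget is simple division, sketched below with the figures from the text: the 15 krad(Si) maximum tested dose and the assumed 1-2 krad annual dose behind shielding. The function name is hypothetical, and the result is the time to reach the tested level, not a measured failure point.

```python
def years_to_reach_dose(tested_dose_krad: float,
                        annual_dose_krad: float) -> float:
    """Years of exposure needed to accumulate the tested dose."""
    return tested_dose_krad / annual_dose_krad


if __name__ == "__main__":
    tested = 15.0  # krad(Si), maximum dose tested without hard failure
    for annual in (1.0, 2.0):  # krad per year, assumed LEO range
        years = years_to_reach_dose(tested, annual)
        print(f"{annual:.0f} krad/yr -> {years:.1f} years")
```

Even at the pessimistic end of the annual dose range, the tested level lies beyond a 5 to 7 year mission.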

Mechanisms of Failure and Mitigation

There are two enemies in the radiation environment:

- Total Ionizing Dose (TID): The cumulative dose from protons and electrons, which leaves charge trapped in the oxide layers of the transistor. This causes a threshold voltage shift ($V_{th}$). Eventually, the transistor cannot switch off (leakage) or cannot switch on.

- Mitigation: Shielding (Aluminum/Polyethylene) and "over-provisioning" the voltage margins.

- Single Event Effects (SEE): A single high-energy heavy ion passes through the silicon, depositing a track of charge. This can cause a Single Event Upset (SEU) (a bit flip) or a Single Event Latchup (SEL) (a short circuit).

- Mitigation: Software. Modern AI training is inherently probabilistic. If a weight in a neural network flips from 0.001 to 0.002, the model converges anyway. We do not need the perfection of a bank ledger; we need the statistical robustness of a brain. For Latch-ups, we use "Watchdog" circuits that cycle the power if current spikes.
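
How much a Single Event Upset matters depends on which bit is hit. The sketch below flips individual bits of a 32-bit float weight using Python's standard `struct` module. The weight value 0.001 comes from the example above. The choice of float32 and of bits 0 and 30 is an assumption made for illustration.

```python
import struct


def to_float32(value: float) -> float:
    """Round a Python float to the nearest float32 value."""
    return struct.unpack("<f", struct.pack("<f", value))[0]


def flip_bit(value: float, bit: int) -> float:
    """Return value (as float32) with one bit of its encoding inverted."""
    (as_int,) = struct.unpack("<I", struct.pack("<f", value))
    (flipped,) = struct.unpack("<f", struct.pack("<I", as_int ^ (1 << bit)))
    return flipped


if __name__ == "__main__":
    weight = to_float32(0.001)
    low = flip_bit(weight, 0)    # least significant mantissa bit
    high = flip_bit(weight, 30)  # most significant exponent bit
    print(f"original:       {weight!r}")
    print(f"bit 0 flipped:  {low!r}")
    print(f"bit 30 flipped: {high!r}")
```

A flip in a low mantissa bit changes the weight by a negligible relative amount, which is the benign case the text describes. A flip in a high exponent bit produces an enormous value, so a software mitigation would plausibly pair the statistical argument with range checks or checksums on weights. That pairing is a suggestion of this sketch, not something stated in the source.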

The "Disposable Supercomputer" Paradigm

The implications of the Google study 6 are profound. It means we can use Commercial Off-The-Shelf (COTS) hardware. We can launch NVIDIA H100s or Google TPUs directly from the factory floor.

The obsolescence cycle of AI hardware is roughly 3-4 years (Moore's Law). The orbital lifetime of a LEO satellite at 500km is roughly 5-7 years. These cycles match perfectly. We do not need the chip to last 15 years; it will be obsolete trash in 5 years anyway. We launch it, burn it out at maximum performance, and let it de-orbit and burn up in the atmosphere, replaced by the next generation. It is a cycle of planned orbital obsolescence.

The Photonic Nervous System: Optical Inter-Satellite Links (OISL)

A data center is not just a collection of chips; it is a network. In a terrestrial cluster, servers are connected by copper cables and fiber optics with massive bandwidth. In space, we cannot run cables between satellites. We must use light.

The Laser Link Imperative

To achieve the performance of a unified supercomputer, the "Orbital Swarm" must utilize Optical Inter-Satellite Links (OISL). This technology uses focused laser beams to transmit data between satellites.

Current state-of-the-art, as demonstrated by Spire Global 7 and Starlink, achieves gigabits per second. This is insufficient for AI training, which requires Terabits. However, the physics of space is actually better for optics than fiber. In a fiber optic cable, light travels through glass ($n \approx 1.5$), suffering dispersion and attenuation. In the vacuum, light travels at $c$, with no dispersion and no absorption in the medium. The remaining loss is geometric: the beam spreads with distance, so the received power falls as the satellites move apart.

Google's analysis 6 suggests that by using Dense Wavelength Division Multiplexing (DWDM)—sending multiple colors of laser light down the same beam path—and keeping satellites in tight formation (kilometers apart), we can achieve links of tens of Terabits per second.

"Delivering performance comparable to terrestrial data centers requires links between satellites that support tens of terabits per second. Our analysis indicates that this should be possible with multi-channel dense wavelength-division multiplexing (DWDM)..." 6

Latency vs. Throughput

Critics often cite latency as the fatal flaw. A signal to orbit and back takes time (20-40ms for LEO, 500ms for GEO). This makes "Real-Time Gaming" or "High-Frequency Trading" poor candidates for space hosting.

However, the primary use case for ODCs is Batch Processing:

- AI Training: Upload a massive dataset (Petabytes). The swarm computes for weeks. Download the model weights (Gigabytes). Latency is irrelevant; throughput is king.

- Scientific Simulation: Climate modeling, protein folding.

- Render Farms: CGI for movies.

In these applications, the "Space Computer" acts as a remote brain. You ask it a difficult question, and it takes its time to answer.
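
The latency-versus-throughput argument above can be checked with two short formulas: the straight-line propagation delay to a satellite directly overhead, and the time to move a dataset over a link. The 550 km LEO altitude, the 100 Gbit/s link rate and the 1 PB dataset are illustrative assumptions, not figures from the source. The propagation delay is only a lower bound, which is presumably why the end-to-end latencies quoted above are higher.

```python
C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def round_trip_ms(altitude_km: float) -> float:
    """Ground-to-satellite-and-back propagation delay, satellite overhead."""
    return 2 * altitude_km * 1e3 / C_M_PER_S * 1e3


def transfer_hours(n_bytes: float, link_bits_per_s: float) -> float:
    """Time to move n_bytes over a link of the given rate."""
    return n_bytes * 8 / link_bits_per_s / 3600


if __name__ == "__main__":
    print(f"LEO (550 km) round trip:    {round_trip_ms(550):.2f} ms")
    print(f"GEO (35,786 km) round trip: {round_trip_ms(35_786):.1f} ms")
    # Hypothetical 1 PB upload over an assumed 100 Gbit/s link.
    print(f"1 PB at 100 Gbit/s:         {transfer_hours(1e15, 100e9):.1f} h")
```

For a batch job whose data transfer takes hours and whose computation takes weeks, a round trip measured in milliseconds is lost in the noise.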

The Economic Singularity: The Starship Factor

For fifty years, the cost to lift a kilogram to orbit was approximately $10,000 to $20,000 (Space Shuttle era). At this price, launching a 20kg server rack chassis was financial suicide.

We are now witnessing the Launch Cost Singularity. The advent of the SpaceX Starship and other reusable heavy-lift vehicles is driving the cost down by orders of magnitude.

The Parity Calculation

Google’s internal analysis 6 suggests a "Parity Point" of roughly $200 per kg.

Let us perform the "Obsessive Scientist" calculation:

- Terrestrial Cost: The cost of electricity ($0.10/kWh) over 5 years for a 1kW server is ~$4,380. Add cooling, land, and building costs. Total ~ $6,000 - $8,000.

- Orbital Cost: A 1kW server might weigh 10kg (stripped of fans/casing). At $200/kg, launch cost is $2,000. The solar panels and satellite bus add perhaps another $2,000. Total ~ $4,000.

- Operational Cost: In orbit, the solar energy is "free" (pre-paid in CAPEX). There is no monthly utility bill.
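
The calculation above can be reproduced in a few lines. All inputs are the figures quoted in the list: $0.10/kWh, a 1 kW server over 5 years, 10 kg of launched mass, $200/kg, and $2,000 for solar panels and satellite bus. The break-even function is an addition of this sketch: it solves for the launch price at which orbital capital cost equals a given terrestrial total.

```python
HOURS_PER_YEAR = 24 * 365


def terrestrial_energy_cost(power_kw: float, years: float,
                            price_per_kwh: float) -> float:
    """Electricity bill for running the server continuously."""
    return power_kw * HOURS_PER_YEAR * years * price_per_kwh


def orbital_capex(mass_kg: float, launch_cost_per_kg: float,
                  bus_and_solar_cost: float) -> float:
    """Up-front cost of launching the server plus its power system."""
    return mass_kg * launch_cost_per_kg + bus_and_solar_cost


def breakeven_launch_cost(terrestrial_total: float, mass_kg: float,
                          bus_and_solar_cost: float) -> float:
    """Launch price ($/kg) at which orbital capex equals the terrestrial total."""
    return (terrestrial_total - bus_and_solar_cost) / mass_kg


if __name__ == "__main__":
    energy = terrestrial_energy_cost(1.0, 5, 0.10)
    orbit = orbital_capex(10, 200, 2_000)
    print(f"terrestrial electricity: ${energy:,.0f}")
    print(f"orbital capex:           ${orbit:,.0f}")
    print(f"break-even vs. electricity alone: "
          f"${breakeven_launch_cost(energy, 10, 2_000):,.0f}/kg")
```

Against electricity alone, these inputs put the break-even launch price a little above the $200/kg parity point. Adding terrestrial cooling, land and building costs pushes it higher.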

When the cost to launch the power plant (solar panels) and the load (server) becomes lower than the cost to rent the power plant (grid) on Earth, the migration becomes inevitable. Capital, like water, follows the path of least resistance.

Financial Validation: The Smart Money Moves

The market is already voting with its checkbook. We are seeing the emergence of "Space-Native" compute startups.

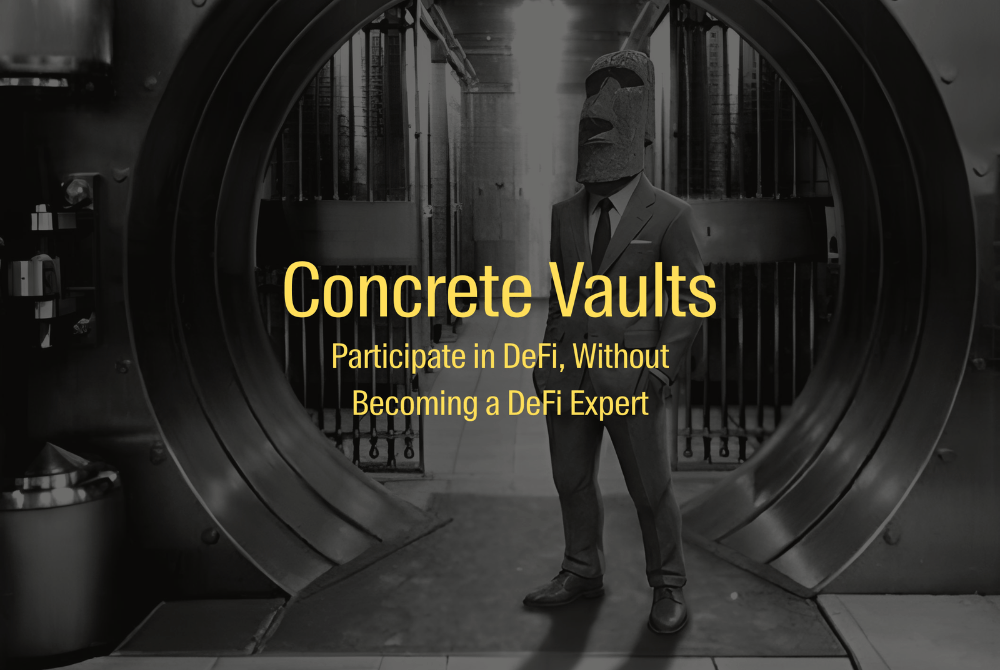

A prime example is SpaceComputer, which recently raised a $10 million seed round, as reported by The Defiant.1 This funding is not for generic space exploration; it is specifically for the deployment of a "SpaceTEE" (Trusted Execution Environment). This signals that the crypto and blockchain sectors—which value security and decentralization above all else—are the early adopters.

Similarly, Starcloud (formerly Lumen Orbit) has raised capital (approx $11M) to deploy NVIDIA GPUs in orbit.8 Their partnership with Axiom Space to place a data center on a commercial space station module by 2026 9 is a concrete step from theory to practice.

These are not science experiments. These are commercial prototypes.

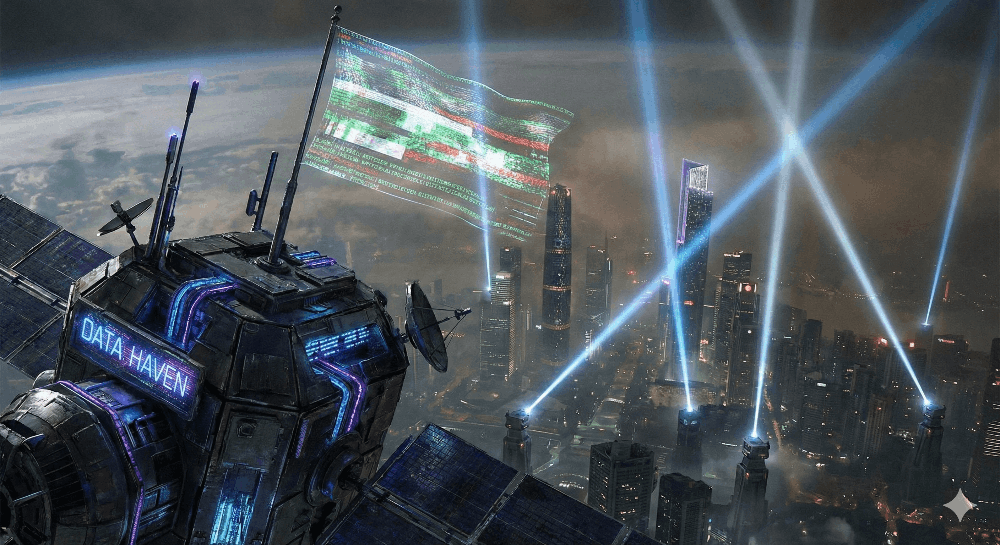

The Geopolitical Vacuum: Sovereignty and Law

Physics is not the only driver. Politics is a powerful accelerant. The terrestrial internet is becoming increasingly "splintered." The Great Firewall of China, the GDPR in Europe, the digital boundaries of the US—data is no longer free. It is fenced.

The "Data Haven" Theory

Space occupies a unique legal niche. Under the 1967 Outer Space Treaty (OST), space is "the province of all mankind" and not subject to national appropriation.10 However, the State on whose registry a launched object is carried retains "jurisdiction and control" over that object (Article VIII).

This creates a paradox that companies like SpaceComputer are exploiting. They market their SpaceTEE as offering "Censorship Resistance" because the node is "Not subject to the jurisdiction of a single country, difficult to be physically attacked or shut down".1

Imagine a server hosting a controversial WikiLeaks archive, or a decentralized finance (DeFi) protocol that violates SEC regulations. If that server is in a data center in Virginia, the FBI raids it. If it is in a satellite at 500km altitude, launched by a shell company in a neutral jurisdiction, who raids it? To physically access it requires a space program. To destroy it with a missile is an act of war and creates debris that threatens everyone's satellites.

The New "High Seas"

We are effectively recreating the "High Seas" legal regime in orbit. Just as ships fly "Flags of Convenience" (Panama, Liberia) to avoid taxes and regulations, future Data Centers will likely fly the flags of nations with permissive digital laws. We may see "Data Havens" launched under the flag of Luxembourg or the Isle of Man, offering "Orbital Hosting" that is immune to terrestrial subpoenas.11

There is currently no international treaty specifically governing data privacy in space.11 This vacuum is a feature, not a bug, for the "Crypto-Anarchist" demographic driving the early SpaceComputer adoption.

The Existential Risk: The Kessler Trap

However, we must not be blind to the catastrophic risks. The "Obsessive Scientist" knows that there is no free lunch. The cost of orbital occupancy is the risk of orbital ruin.

The Debris Cascade

The Kessler Syndrome describes a scenario where the density of objects in LEO becomes high enough that collisions between objects cause a cascade—each collision generating thousands of shrapnel pieces, which then cause more collisions.12

Data Centers are problematic because they are large. To cool a megawatt of compute, we need acres of radiators. To power it, we need acres of solar panels. These structures have a massive "cross-sectional area." They are giant targets.

The 2024 breakup of the Chinese Long March 6A rocket, which created 700+ trackable fragments in the critical 700-800km altitude band, serves as a grim warning.13 If a piece of debris the size of a marble (1 cm) hits a data center radiator at 14 km/s (hypervelocity), it releases energy equivalent to a hand grenade. It would shatter the cooling loop, knock the computer offline, and create a cloud of graphite and coolant droplets that would threaten the entire "Orbital Cloud."
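
The impact energy follows from $E = \tfrac{1}{2} m v^2$. The sketch below evaluates it for the 1 cm object at 14 km/s described above. Treating the fragment as a solid aluminum sphere (2,700 kg/m³) is an assumption of this sketch, as is the conversion to TNT mass at 4,184 J per gram.

```python
import math

ALUMINUM_DENSITY = 2_700.0  # kg/m^3, assumed fragment material
TNT_J_PER_GRAM = 4_184.0    # conventional TNT energy equivalent


def sphere_mass_kg(diameter_m: float,
                   density: float = ALUMINUM_DENSITY) -> float:
    """Mass of a solid sphere of the given diameter."""
    return density * math.pi * diameter_m**3 / 6


def kinetic_energy_j(mass_kg: float, speed_m_per_s: float) -> float:
    """Classical kinetic energy."""
    return 0.5 * mass_kg * speed_m_per_s**2


if __name__ == "__main__":
    mass = sphere_mass_kg(0.01)
    energy = kinetic_energy_j(mass, 14_000.0)
    print(f"mass:   {mass * 1e3:.2f} g")
    print(f"energy: {energy / 1e3:.0f} kJ "
          f"(~{energy / TNT_J_PER_GRAM:.0f} g TNT equivalent)")
```

A fragment weighing little more than a gram carries over a hundred kilojoules, equivalent to tens of grams of TNT delivered to a point on a thin radiator panel.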

The Worst Case: Orbital Lock-in

If we populate the orbit with thousands of massive data centers without strict regulation—without "Active Debris Removal" (ADR) and autonomous collision avoidance—we risk sterilizing the near-Earth environment. A full Kessler cascade would create a shell of debris that would prevent any launch for centuries. We would be trapped on Earth, with our hot computers, unable to leave.12

Case Studies: The Pioneers of the Void

The landscape is already populating with divergent approaches.

SpaceComputer: The Cryptographic Fortress

SpaceComputer 1 focuses on the SpaceTEE. Their thesis is not just "cheap power" but "absolute trust." By running code in a trusted execution environment in space, they eliminate the "Oracle Problem" in blockchain. If a satellite says "The temperature in New York is 20°C," you can cryptographically verify that the signal came from the satellite and was not tampered with. This is high-value, low-volume compute.

Starcloud (Lumen Orbit): The Brute Force

Starcloud 8 is pursuing the "Brute Force" AI market. Their "Starcloud-1" mission aims to qualify the NVIDIA H100 GPU for space. Their vision is scaling to gigawatts. They represent the "Industrial" approach—using space as a power plant.

Thales Alenia Space (ASCEND): The Sovereign Cloud

The ASCEND project 16, funded by the European Commission, represents the "State" approach. They are studying the feasibility of assembling massive data centers in orbit using robotics, specifically to meet the EU's Green Deal goals. They view ODCs as a strategic infrastructure to ensure Europe's "Digital Sovereignty" and carbon neutrality by 2050.

The 50-Year Horizon: 2075 Predictions

As a scientist, I extrapolate the curves. Where do we end up?

The Best Case: The "Type 0.9" Civilization

By 2075, the "Terrestrial Cloud" is a memory.

- The Orbital Ring: A visible band of glittering structures encircles the Earth. These are the "Compute Reefs"—modular, self-assembling swarms of data centers.

- Energy Balance: Humanity has achieved "Net Zero" not by austerity, but by export. We export our entropy (heat) to space. The planetary energy budget is balanced.

- The Dyson Precursor: We are harvesting 1% of the total solar flux intercepting the Earth system, solely for computation. We are on the verge of becoming a Kardashev Type I civilization.18

- AI Symbiosis: The global AI resides in the cold vacuum, thinking deep thoughts, communicating with the surface via neutrino beams or terabit lasers.
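
The "Type 0.9" label can be sanity-checked against the 1% figure in the list above. The sketch below computes the sunlight intercepted by Earth's disc, using the 1,360 W/m² solar flux quoted earlier, and applies Carl Sagan's interpolation of the Kardashev scale, $K = (\log_{10} P - 6)/10$ with $P$ in watts. The use of Sagan's formula and of a mean Earth radius of 6,371 km are assumptions of this sketch, not something the source states.

```python
import math

SOLAR_FLUX = 1_360.0       # W/m^2, as quoted earlier in the article
EARTH_RADIUS_M = 6.371e6   # mean radius, assumed


def intercepted_solar_power_w() -> float:
    """Sunlight falling on Earth's cross-sectional disc."""
    return SOLAR_FLUX * math.pi * EARTH_RADIUS_M**2


def kardashev_rating(power_w: float) -> float:
    """Sagan's continuous Kardashev rating for a given power in watts."""
    return (math.log10(power_w) - 6) / 10


if __name__ == "__main__":
    total = intercepted_solar_power_w()
    harvested = 0.01 * total
    print(f"intercepted: {total:.2e} W")
    print(f"1% of that:  {harvested:.2e} W")
    print(f"rating:      {kardashev_rating(harvested):.2f}")
```

Under these assumptions, harvesting 1% of the intercepted flux corresponds to a rating a little above 0.9.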

The Worst Case: The Kessler Cage

By 2075, the sky is grey with aluminum dust.

- The Event: The "Great Collision of 2038," where a rogue ASAT test hit a massive commercial data center, triggering a chain reaction.

- The Consequence: No satellites exist below 2,000 km. GPS is gone. Weather forecasting is gone.

- The Heat Death: Trapped on the surface, humanity continued to build terrestrial data centers. The heat load, combined with climate change, has made the equator uninhabitable. We are burning the last of the coal to run the cooling towers to train the AIs to tell us how to survive the heat. It is a thermodynamic suicide pact.

The Ascent is Mandatory

The analysis leads to a singular conclusion. The Orbital Data Center is not a luxury. It is a thermodynamic valve.

Civilization is a heat engine. As we transition to a "Cognitive Economy," our heat output is shifting from mechanical (cars, factories) to computational (servers). The biosphere cannot absorb this heat. The vacuum can.

We have the rockets (Starship). We have the chips (Trillium/H100). We have the lasers (OISL). The only variable remaining is the will to execute the migration before the debris field closes the door.

We must ascend. To stay is to stagnate, and eventually, to boil.

Data Tables

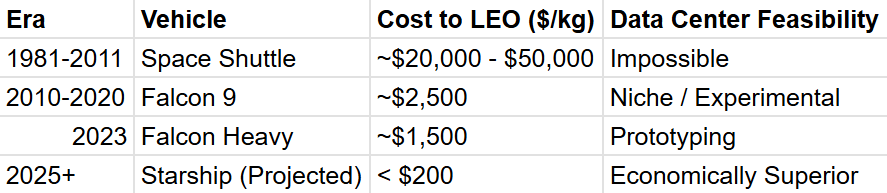

Table 1: The Launch Cost Singularity (Comparison of historical launch costs vs. projected Starship costs)

Table 2: Environmental Impact Analysis (Terrestrial vs. Orbital Resource Consumption)


Sources Used In the Article

1. SpaceComputer raises 10 million! The science fiction of space satellites running on the blockchain becomes a reality. | MarketWhisper on Gate Square

2. AI's Cooling Problem: How Data Centers Are Transforming Water Use

3. How Starcloud Is Bringing Data Centers to Outer Space - NVIDIA Blog

4. Thermal Design for Spaceflight

5. Correlation Between Temperature and Radiation - Climate Science Investigations South Florida - Energy: The Driver of Climate

6. Exploring a space-based, scalable AI infrastructure system design - Google Research

7. Spire Achieves Two-Way Laser Communication Between Satellites in Space

8. Starcloud: Data centers in space - Y Combinator

9. Lumen Orbit, a Seattle-area startup that wants to put data centers in space, raises $11M

10. The Outer Space Treaty - UNOOSA

11. Exploring the Legal Frontier of Space and Satellite Innovation - Morgan Lewis

12. Kessler syndrome - Wikipedia

13. The Kessler Syndrome - KeepTrack

14. Kessler's syndrome: a challenge to humanity - Frontiers

15. SpaceComputer: Building the Orbital Root of Trust

16. Lower emissions and reinforced digital sovereignty: the plan for datacentres in space

17. ASCEND: Thales Alenia Space to lead European feasibility study for ...

18. Kardashev scale - Wikipedia

19. Lumen Orbit changes its name to Starcloud and raises $10M for space data centers