The Quantum Apocalypse is coming…but not in the way you think

If you’ve spent any time scrolling through tech news lately, you’ve probably seen the headlines. Quantum computing is usually portrayed as this mystical, glowing monolith that’s going to solve world hunger on Tuesday and break all of human privacy by Wednesday. It’s the ultimate deus ex machina of the silicon world. But here’s the thing: it’s 2026, and the Quantum Apocalypse hasn’t quite arrived. At least not in the way the sci-fi movies promised.

Instead, we’ve landed in a bit of a weird hybrid era. It’s less like replacing your laptop with a warp drive and more like adding a very temperamental, sub-zero turbocharger to a classic car. We’re living in the age of NISQ (Noisy Intermediate-Scale Quantum), where the hardware is finally powerful enough to take on problems that are out of practical reach for classical computers, but also noisy enough to make a lot of mistakes. Think of it as a genius mathematician who can calculate the secrets of the universe but occasionally forgets how to carry the one because someone in the next room sneezed.

We Aren’t Replacing PCs (Yet)

Let’s clear something up right away. Your next MacBook isn’t going to have a quantum chip. I know, I’m disappointed too. But the reason is purely physical. Despite the massive 2026 milestones from heavyweights like IBM and Google, these chips are still incredibly high-maintenance divas. They have to be kept at temperatures colder than outer space just to keep their qubits from decohering, which is basically quantum-speak for getting distracted by a stray vibration and losing all their data.

In 2026, we’ve realized that quantum computers aren’t general-purpose machines. They are the world’s most expensive specialized co-processors. We’re seeing a hybrid model take over, with Nvidia using AI to accelerate quantum error correction and help these noisy chips finally become useful. In fields like drug discovery, researchers use their regular classical computers to handle the data management, then offload the nightmare-level molecular simulations to a quantum chip. It’s a tag-team effort. We’re finally seeing these accelerators tackle things like finding new catalysts for carbon capture: tasks where close enough is actually a massive breakthrough, even if the machine still makes the occasional quantum hiccup.

Measuring Success Beyond Just Qubits

For years, we were obsessed with qubit counts. It was the megahertz race of the 2020s. But in 2026, the industry has shifted its focus to a much more honest metric: QuOps (error-free Quantum Operations). As the experts at Riverlane have pointed out, a million noisy qubits are useless if they can’t finish a calculation before errors wreck the result.
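
To get a feel for why error-free operations matter more than raw qubit counts, here is a back-of-the-envelope sketch. It assumes every operation fails independently with the same probability, which is a simplification, and the error rates are illustrative numbers rather than measured figures from any vendor. The function names are made up for this example.

```python
import math


def clean_run_probability(error_rate: float, num_ops: int) -> float:
    """Chance that num_ops operations all succeed, assuming independent errors."""
    return (1.0 - error_rate) ** num_ops


def ops_budget(error_rate: float, target_success: float = 0.5) -> int:
    """Largest operation count that still finishes clean with target_success odds."""
    return int(math.log(target_success) / math.log(1.0 - error_rate))


if __name__ == "__main__":
    # Illustrative per-operation error rates (assumed, not measured).
    for rate in (1e-3, 1e-6):
        print(f"error rate {rate:g}: about {ops_budget(rate):,} operations "
              f"before a coin-flip chance of failure")
```

Under this toy model, an error rate of one in a thousand gives you only several hundred operations before the run is more likely to fail than not. Getting to a million clean operations needs an error rate in the neighborhood of one in a million, which is why error correction, not qubit count, is the bottleneck.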

This shift is huge because it means we’ve stopped trying to build a magic box and started doing real engineering. We are now seeing the first Fault-Tolerant Quantum Computers (FTQCs) emerge: small systems that use error-correcting codes to protect information. It’s the difference between a paper plane and a Wright brothers’ glider. It’s not a 747 yet, but it’s staying in the air. Companies are now focusing on a generational roadmap (KiloQuOp, MegaQuOp, and eventually GigaQuOp, meaning thousands, millions, and billions of error-free operations). That gives us a clear path to when these machines will actually outperform a supercomputer on a consistent basis, starting with MegaQuOp systems for specialized tasks and moving toward GigaQuOp for broader commercial applications.
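
The core idea behind error-correcting codes can be sketched with the simplest classical cousin, a repetition code: store several copies of a bit and take a majority vote. This is only an analogy. Real quantum codes can’t copy qubits and have to handle more kinds of errors, so treat the function below (a hypothetical name, with independent bit flips assumed) as an illustration of the trend, not of any actual FTQC design.

```python
from math import comb


def logical_error_rate(p: float, copies: int = 3) -> float:
    """Probability that a majority vote over `copies` noisy bits is wrong.

    Assumes each copy flips independently with probability p.
    """
    if copies % 2 == 0:
        raise ValueError("use an odd number of copies so the vote cannot tie")
    majority = copies // 2 + 1
    # The vote fails when a majority of the copies have flipped.
    return sum(
        comb(copies, k) * p**k * (1 - p) ** (copies - k)
        for k in range(majority, copies + 1)
    )


if __name__ == "__main__":
    for copies in (1, 3, 5, 7):
        print(copies, logical_error_rate(0.01, copies))
```

With a 1% physical error rate, three copies already push the logical error rate down to roughly 0.03%, and more copies push it lower still. The catch is that this only works when the underlying hardware is good enough: in this toy model, once `p` goes above 50%, adding copies makes things worse.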

The Ghost in the Machine

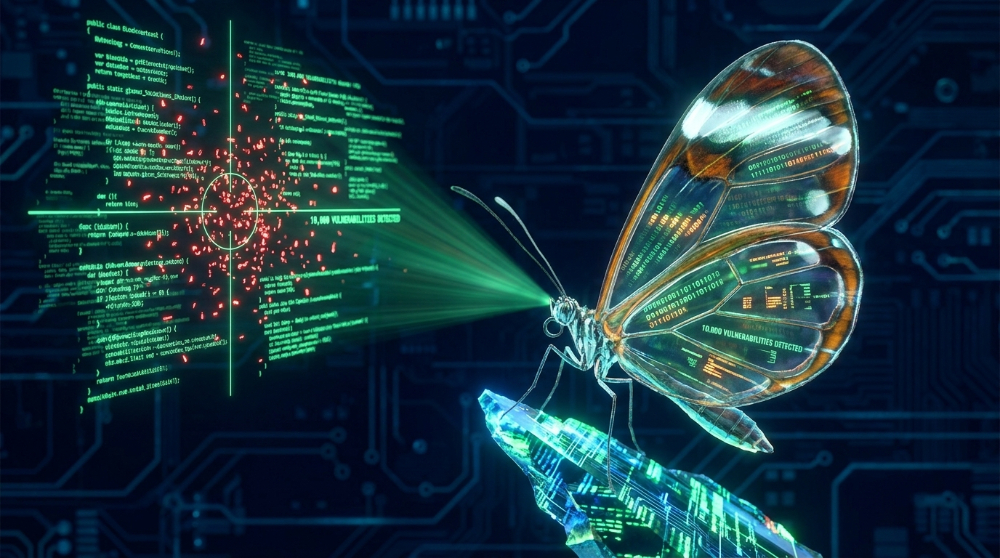

Now, let’s talk about the part that keeps security experts awake at night. You might think, “If quantum computers are still noisy and error-prone, my encrypted emails are safe, right?” Well, yes, for today. But there’s a massive “but” coming. Enter HNDL: Harvest Now, Decrypt Later. This isn’t just a spy thriller plot; it’s a strategy security agencies warn is in use right now.

Imagine a bad actor vacuuming up terabytes of your encrypted data today (bank records, government cables, trade secrets) and just… sitting on it. They can’t read it yet, but they’re betting that in 10 to 15 years, a stable quantum computer will exist that can run Shor’s Algorithm. Once that happens, they can crack open those old files like a digital time capsule. According to recent expert surveys, there is a meaningful probability of a cryptographically relevant quantum computer appearing by the mid-2030s. If your data needs to stay secret for decades, it’s effectively sitting on a shelf with a countdown timer attached to it.
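
A common rule of thumb for that countdown timer is Mosca’s inequality. It isn’t something the surveys above spell out, so take it as an outside framing: if the years your data must stay secret plus the years you need to migrate exceed the years until a cryptographically relevant quantum computer exists, you have a problem. The sketch below uses made-up numbers and a hypothetical function name.

```python
def exposure_years(secrecy_years: float,
                   migration_years: float,
                   years_to_crqc: float) -> float:
    """Years during which harvested data would still be sensitive but readable.

    Mosca's inequality: you are exposed when
    secrecy_years + migration_years > years_to_crqc.
    """
    return max(0.0, secrecy_years + migration_years - years_to_crqc)


if __name__ == "__main__":
    # Illustrative inputs only: 25-year secrets, 5-year migration, CRQC in 10 years.
    print(exposure_years(25, 5, 10))
    # Short-lived data with a quick migration is not at risk in this model.
    print(exposure_years(2, 1, 10))
```

The uncomfortable part is that the migration time counts against you. Data encrypted with today’s algorithms during a slow rollout is still being harvested.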

The Death of RSA and the Rise of the Shield

We’ve reached the point where the end of encryption as we know it isn’t a math theory anymore; it’s a massive logistics headache. RSA, the workhorse of our current asymmetric encryption (the stuff behind the padlock in your browser), is built on the assumption that factoring the product of two giant prime numbers is hard. To a classical computer, it’s effectively impossible. To a stable quantum computer running Shor’s Algorithm, it’s a light snack.
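
To see why factoring is the whole ballgame, here is a toy RSA example with textbook-sized numbers. It is a sketch for intuition only: real keys use moduli of 2048 bits or more plus padding, and the trial-division “attack” below is a classical stand-in for the factoring step that Shor’s Algorithm would perform, not a quantum algorithm. The helper names are invented for this example.

```python
from typing import Tuple


def factor_semiprime(n: int) -> Tuple[int, int]:
    """Trial division: fine for toy numbers, hopeless for real key sizes."""
    if n % 2 == 0:
        return 2, n // 2
    f = 3
    while f * f <= n:
        if n % f == 0:
            return f, n // f
        f += 2
    raise ValueError("n has no nontrivial factors")


def private_exponent(e: int, p: int, q: int) -> int:
    """Recover the private key from the public exponent and the prime factors."""
    return pow(e, -1, (p - 1) * (q - 1))  # modular inverse, Python 3.8+


# Public key: anyone can see these two numbers.
n, e = 3233, 17
ciphertext = pow(65, e, n)  # encrypt the "message" 65

# The attacker only has (n, e, ciphertext). Factoring n hands over everything.
p, q = factor_semiprime(n)
d = private_exponent(e, p, q)
recovered = pow(ciphertext, d, n)

print(p, q, recovered)
```

Nothing about the private key was ever leaked here. Once the public modulus is factored, the secret falls out with one line of arithmetic, which is exactly why a machine that factors quickly ends RSA.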

The good news? The counter-offensive is finally here. 2026 is a major turning point because the “wait and see” approach is officially dead. Agencies like CISA are now providing explicit guidance for transitioning to Post-Quantum Cryptography (PQC), specifically prioritizing the ML-KEM standard for establishing shared keys. ML-KEM is a new, lattice-based standard designed to be quantum-resistant. The big buzzword right now is crypto-agility. Companies are realizing they can’t just set their encryption and forget it for twenty years. They need systems that can swap out algorithms as easily as you’d update an app on your phone. It’s a race against time, really. Can we re-encrypt the world’s most sensitive infrastructure before the “harvest now” crowd gets their hands on a stable QPU?
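
Crypto-agility mostly comes down to two habits: never hard-code the algorithm, and always record which algorithm protected each piece of data. Here is a minimal sketch of that pattern. Python’s standard library has no ML-KEM, so two HMAC variants stand in as the swappable algorithms; the registry, the function names, and the envelope format are all assumptions made for illustration.

```python
import hashlib
import hmac
from typing import Callable, Dict, Tuple

Tagger = Callable[[bytes, bytes], bytes]
Envelope = Tuple[str, bytes]  # (algorithm id, authentication tag)

# Stand-in algorithms; a real system would register KEMs or signature schemes.
REGISTRY: Dict[str, Tagger] = {
    "hmac-sha256": lambda key, msg: hmac.new(key, msg, hashlib.sha256).digest(),
    "hmac-sha3-256": lambda key, msg: hmac.new(key, msg, hashlib.sha3_256).digest(),
}


def protect(key: bytes, msg: bytes, algorithm: str) -> Envelope:
    """Tag the message and remember which algorithm produced the tag."""
    return algorithm, REGISTRY[algorithm](key, msg)


def verify(key: bytes, msg: bytes, envelope: Envelope) -> bool:
    """Check the tag using whatever algorithm the envelope names."""
    algorithm, tag = envelope
    return hmac.compare_digest(tag, REGISTRY[algorithm](key, msg))


def migrate(key: bytes, msg: bytes, envelope: Envelope, new_algorithm: str) -> Envelope:
    """Re-protect data under a new algorithm after verifying the old tag."""
    if not verify(key, msg, envelope):
        raise ValueError("refusing to migrate data that fails verification")
    return protect(key, msg, new_algorithm)


if __name__ == "__main__":
    old = protect(b"secret-key", b"payload", "hmac-sha256")
    new = migrate(b"secret-key", b"payload", old, "hmac-sha3-256")
    print(old[0], "->", new[0], verify(b"secret-key", b"payload", new))
```

Because callers only ever pass an algorithm name, retiring an algorithm becomes a configuration change plus a migration pass rather than a rewrite.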

More Than Just Fast Downloads

While everyone is worried about breaking codes, there’s a whole other side of the story that’s actually pretty hopeful: the Quantum Internet. In 2026, we aren’t just building computers; we’re starting to build the networks that connect them. This isn’t about watching 8K Netflix. It’s about Quantum Key Distribution (QKD).

The Quantum Internet allows us to send information in a way that is physically impossible to eavesdrop on without being detected. Because of the laws of physics, if someone tries to observe a quantum signal in transit, the act of measuring disturbs it, and the sender and receiver can spot the tampering when they compare a sample of their results. We are seeing testbeds for this technology popping up in places like Maryland and India, laying the groundwork for a future where communication isn’t just encrypted by math, but protected by the fundamental laws of the universe.
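
Here is a rough classical simulation of how that detection works, modeled on BB84, the best-known QKD protocol. The article doesn’t say which protocol the testbeds use, so BB84 is an assumption, and this is an idealized sketch with no channel noise or photon loss. The sender and receiver each pick a random basis per photon and keep only the rounds where their bases matched. An eavesdropper who measures and resends has to guess the basis too, and her wrong guesses show up as errors.

```python
import random


def measure(bit: int, prep_basis: int, meas_basis: int, rng: random.Random) -> int:
    """Matching basis returns the bit; a mismatched basis gives a coin flip."""
    return bit if prep_basis == meas_basis else rng.randint(0, 1)


def sifted_error_rate(n_photons: int, eavesdrop: bool, seed: int = 0) -> float:
    """Fraction of mismatched bits among rounds where sender and receiver bases agree."""
    rng = random.Random(seed)
    kept = errors = 0
    for _ in range(n_photons):
        bit, sender_basis = rng.randint(0, 1), rng.randint(0, 1)
        in_flight_bit, in_flight_basis = bit, sender_basis
        if eavesdrop:
            # Intercept-resend attack: measure in a guessed basis, then resend.
            spy_basis = rng.randint(0, 1)
            in_flight_bit = measure(bit, sender_basis, spy_basis, rng)
            in_flight_basis = spy_basis
        receiver_basis = rng.randint(0, 1)
        received = measure(in_flight_bit, in_flight_basis, receiver_basis, rng)
        if receiver_basis == sender_basis:  # sifting step
            kept += 1
            errors += received != bit
    return errors / kept if kept else 0.0


if __name__ == "__main__":
    print("quiet channel:", sifted_error_rate(20_000, eavesdrop=False))
    print("with eavesdropper:", sifted_error_rate(20_000, eavesdrop=True))
```

On a quiet channel the sifted bits agree perfectly in this model. With the eavesdropper in the loop, about a quarter of them disagree, which is the fingerprint the two parties look for when they compare a sample of their key.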

The Fault-Tolerant Horizon

So, where does that leave us? Honestly, we’re in the vacuum tube era of quantum computing. It’s messy, it’s expensive, it requires a small army of Ph.D.s to keep it running, and it’s prone to breaking if you look at it wrong. But the transition from NISQ to true fault-tolerant computing is the next big milestone. We’re moving away from just more qubits and focusing on better qubits that can correct their own mistakes.

The next few years won’t be about a single eureka moment that changes the world overnight. Instead, it’ll be a slow, steady grind of improving error correction and scaling up. The magical super-processor is still on the horizon, but the practical quantum accelerator is already in the room. The real question for the rest of us isn’t whether quantum will arrive; it’s whether we’ll have our digital shields up in time to meet it. It’s a weird, exciting, and slightly terrifying time to be online, but hey, that’s just another Tuesday in tech, I guess.

Thanks for reading, everyone! Visit my site to learn more about me and explore what I’m building at Learn With Hatty. I hope everyone has a great day and, as I always say, stay curious and keep learning.