From Profit to Penalty: Jury Slams Meta with $375M for Endangering Kids

🚨 Landmark Verdict: Jury Finds Meta Knowingly Harmed Children for Profit – What This Means for Our Kids

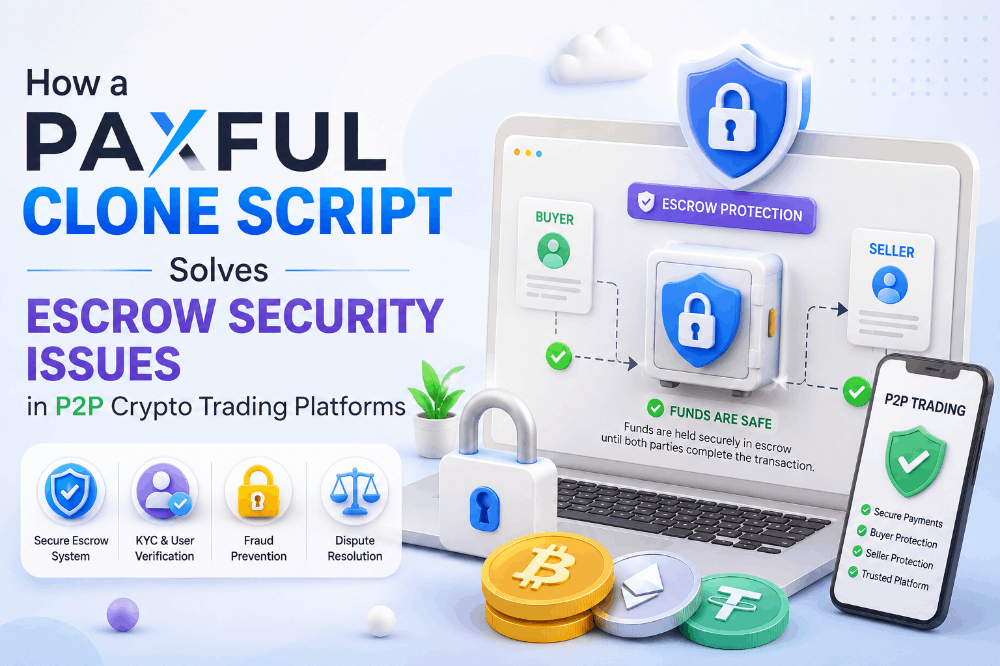

This week, juries delivered historic blows to Meta. In New Mexico, the company was ordered to pay $375 million for violating consumer protection laws. It was accused of misleading the public about platform safety while knowingly exposing children to sexual exploitation, predators, and harmful content on Instagram, Facebook, and WhatsApp.

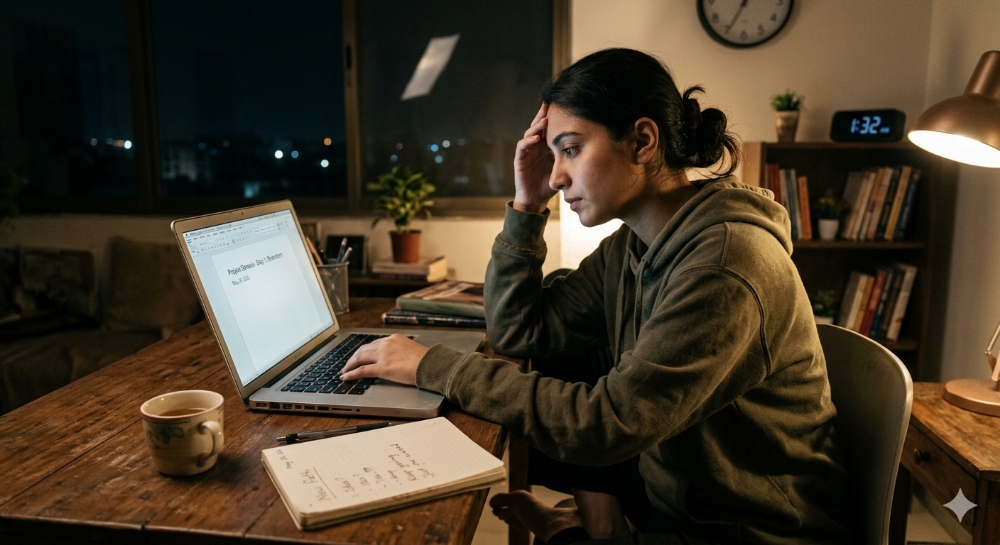

In Los Angeles, another jury found Meta and YouTube liable in a bellwether case brought by a now-20-year-old woman (known as Kaley or K.G.M.). She testified that addictive features (infinite scroll, autoplay, hyper-personalized algorithms) hooked her before age 10, fueling anxiety, depression, body dysmorphia, and suicidal thoughts. The companies were found negligent for designing platforms “as addictive as cigarettes or digital casinos.” Damages: at least $6 million combined in that case, with thousands more lawsuits pending from families, school districts, and states.

The impact on children is devastating and well documented. Internal Meta research (leaked years ago) reportedly showed Instagram worsening body image issues, especially among teen girls. Features engineered for maximum engagement keep kids scrolling for hours, disrupting sleep, self-esteem, and real-world relationships. Schools report rising mental health crises, attention deficits, and social withdrawal that they link to heavy social media use. Predatory grooming and explicit content exposure have surged, turning platforms meant for connection into minefields for the vulnerable.

This isn’t just about one company. It’s a wake-up call for the entire industry. For years, Big Tech prioritized growth metrics and ad revenue over child safety. Age verification was weak, reporting tools slow, and profit motives often trumped protection. Parents watched helplessly as algorithms pushed harmful content to young users.

The verdicts signal that accountability is finally arriving. They could reshape product design, forcing better parental controls, reduced addictive features for minors, stricter moderation, and transparency about internal research. Appeals are expected, but the precedent is set: knowingly harming children for profit carries consequences.

As parents, educators, and a society, we must do more. Limit screen time, use family controls, teach digital literacy, and demand better from platforms. In places like Lagos, where smartphone access is exploding among youth, these issues hit hard. Our children deserve safer digital spaces.

This moment isn’t the end; it’s the beginning of real reform. Tech should serve humanity, not exploit developing minds. What changes do you want to see? Stronger age gates? Algorithm transparency? Better mental health safeguards?

Parents and guardians — share your experiences below. Let’s push for a healthier internet for the next generation. 👇

#MetaLawsuit #ProtectOurKids #SocialMediaAddiction #ChildMentalHealth