What Are the Hidden Risks of Using AI in Enterprises?

Artificial intelligence is reshaping how enterprises operate. Faster decisions, smarter automation, competitive advantages that were unimaginable five years ago.

But with speed comes risk. Most enterprises focus on what AI can deliver. Far fewer are asking the harder question: what is AI doing to our data, our compliance posture, and our legal exposure while we are busy being impressed by the results?

The hidden risks of enterprise AI are real, active, and compounding. Here is what every business leader and technical team needs to understand before the next deployment.

Risk 1: Sensitive Data Is Leaving Your Environment Without You Knowing

When a team member pastes a client contract, financial record, or internal report into a mainstream AI tool, that data does not stay inside your organisation. It travels to a third-party provider’s servers. Depending on the plan and configuration, it may be logged, stored, or used for model training.

Most employees are not being careless. They are doing what the workflow requires. The problem is that most AI tools were built for convenience, not for the sensitivity level of enterprise data.

The fix is architectural. A “privacy-first AI tool” anonymises sensitive data before it reaches the model. Identifying information is stripped at the pre-processing layer. The model works on a clean version. The raw data never crosses the organisational boundary.
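As a rough illustration of that pre-processing step, the sketch below swaps identifiers for placeholder tokens before any text leaves the organisation. It keeps the token-to-value mapping locally so a model response can be re-identified afterwards. The patterns, `anonymise`, and `restore` are hypothetical names chosen for this example, not any specific product's implementation. Two regular expressions are nowhere near sufficient coverage for production use.

```python
import re

# Illustrative patterns only (assumption): real coverage needs names,
# addresses, account numbers and more, typically via NER plus rules.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "IBAN": re.compile(r"\b[A-Z]{2}\d{2}[A-Z0-9]{11,30}\b"),
}


def anonymise(text):
    """Replace matches with placeholder tokens.

    Returns the cleaned text and a token -> original mapping that is
    meant to stay inside the organisational boundary.
    """
    mapping = {}
    for label, pattern in PATTERNS.items():
        seen = {}  # original value -> token, so repeats reuse one token

        def substitute(match, label=label, seen=seen):
            value = match.group(0)
            if value not in seen:
                token = f"[{label}_{len(seen) + 1}]"
                seen[value] = token
                mapping[token] = value
            return seen[value]

        text = pattern.sub(substitute, text)
    return text, mapping


def restore(text, mapping):
    """Re-insert the original values into a model response, locally."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


raw = "Contact jane.doe@example.com about IBAN DE89370400440532013000."
clean, mapping = anonymise(raw)
print(clean)  # only this version would be sent to the model
```

Only `clean` is passed to the external model. `mapping` never leaves the environment, which is what keeps the raw identifiers inside the boundary.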

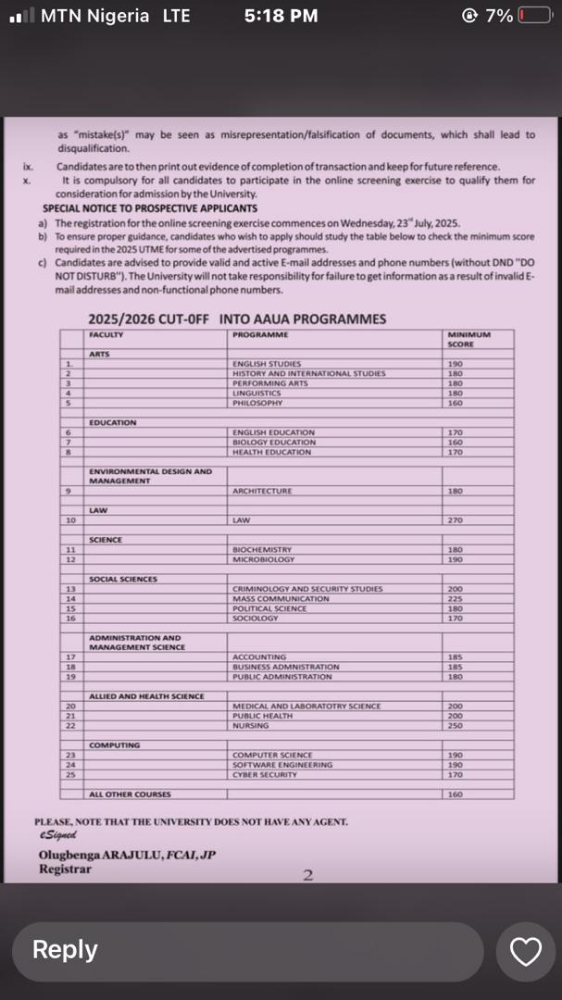

Risk 2: EU AI Act Compliance Is Not Optional Anymore

The EU AI Act has been in force since 1 August 2024, and its obligations are being phased in. For enterprises operating in or serving EU markets, this is not a future concern. It is a current legal obligation with real enforcement timelines.

The August 2026 deadline for Annex III high-risk AI systems covers certain AI uses in financial services (such as credit scoring), hiring, healthcare, education, and critical infrastructure. Non-compliance with the high-risk obligations carries fines of up to €15 million or 3% of global annual turnover, whichever is higher. Ignorance of the classification is not a defence.
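To make the fine ceiling concrete, here is a small worked calculation. It assumes the "whichever is higher" rule that the Act applies to undertakings other than SMEs. The function name is hypothetical. The result is a statutory maximum, not a prediction of an actual penalty.

```python
FIXED_CAP_EUR = 15_000_000   # fixed ceiling quoted above
TURNOVER_RATE_PERCENT = 3    # share of global annual turnover


def fine_ceiling_eur(global_annual_turnover_eur: int) -> int:
    """Upper bound of the fine: the higher of the two ceilings.

    Assumption: the undertaking is not an SME (for SMEs the Act
    applies the lower of the two amounts instead).
    """
    turnover_based = global_annual_turnover_eur * TURNOVER_RATE_PERCENT // 100
    return max(FIXED_CAP_EUR, turnover_based)


print(fine_ceiling_eur(2_000_000_000))  # 60000000: the 3% figure dominates
print(fine_ceiling_eur(100_000_000))    # 15000000: the fixed cap dominates
```

For any enterprise with more than €500 million in global turnover, the percentage-based ceiling is the one that applies.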

Compliance requires a continuous risk management process, documented data governance, detailed technical records, and human oversight mechanisms. These are architecture requirements, not policy documents. Most enterprises have the policy. They are missing the architecture.

Risk 3: Your AI Governance Gap Is Bigger Than You Think

Most organisations have an AI policy. Very few have AI governance. The difference is significant.

A policy states intent. Governance is what your AI infrastructure actually does. When data flows through your AI systems right now, is it anonymised before inference? Is there an auditable record? Can you demonstrate to a regulator or enterprise client that your controls are real and operational?
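One way to make "an auditable record" tangible is a tamper-evident log in which each entry carries a hash of the previous one, so that later edits become detectable. The sketch below is a minimal illustration under that assumption. The field names and the `append_entry` and `verify_chain` helpers are hypothetical, and a real deployment would also need secure storage and access controls.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Stable SHA-256 over the entry body (keys sorted for determinism)."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_entry(log, event):
    """Append an event dict to the log, chained to the previous entry."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "event": event,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify_chain(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if body["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
append_entry(log, {"action": "inference", "anonymised": True, "model": "provider-a"})
append_entry(log, {"action": "inference", "anonymised": True, "model": "provider-b"})
print(verify_chain(log))  # True while the log is untouched
```

A record like this answers the regulator's question directly. It shows which requests were anonymised and which model they were routed to, with evidence that the log has not been edited since.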

Cyber insurers are increasingly asking for documented AI data controls as part of underwriting. Enterprise procurement teams are sending AI security questionnaires before signing contracts. The governance gap is no longer invisible. It is showing up in insurance renewals, procurement deals, and regulatory audits.

Risk 4: LLM Vendor Lock-In Is a Long-Term Strategic Risk

When AI workflows are built directly into a single LLM provider’s platform without a privacy layer in between, you are not just using that provider. You are dependent on their pricing decisions, their policy changes, their model deprecations, and their terms of service.

An LLM anonymiser layer (a pre-processing pipeline that strips sensitive data before inference and is compatible with any model) reduces this dependency. Your governance controls travel with you when you switch providers. Your data sovereignty is preserved regardless of which model you are routing to.
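The idea can be sketched as a thin gateway that owns the anonymisation step and treats the model as a swappable dependency. Everything named here (`ModelProvider`, `PrivacyGateway`, the two stand-in providers, and the single email pattern) is hypothetical and exists only to show the shape of the design. No real vendor SDK is called.

```python
import re
from typing import Protocol

EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact(text: str) -> str:
    """Toy anonymiser (assumption): masks email addresses only."""
    return EMAIL.sub("[EMAIL]", text)


class ModelProvider(Protocol):
    """Anything with a complete() method can sit behind the gateway."""

    def complete(self, prompt: str) -> str: ...


class EchoProvider:
    """Stand-in for vendor A; a real adapter would call that vendor's API."""

    def complete(self, prompt: str) -> str:
        return f"echo: {prompt}"


class ShoutProvider:
    """Stand-in for vendor B, to show that swapping needs no other changes."""

    def complete(self, prompt: str) -> str:
        return prompt.upper()


class PrivacyGateway:
    """Applies the same redaction no matter which provider is plugged in."""

    def __init__(self, provider: ModelProvider):
        self.provider = provider

    def ask(self, prompt: str) -> str:
        return self.provider.complete(redact(prompt))


gateway = PrivacyGateway(EchoProvider())
print(gateway.ask("Email bob@example.com today"))  # echo: Email [EMAIL] today

gateway.provider = ShoutProvider()  # switch vendors; controls are unchanged
print(gateway.ask("Email bob@example.com today"))  # EMAIL [EMAIL] TODAY
```

Because redaction lives in the gateway rather than in any vendor integration, changing providers means writing one new adapter class. The privacy controls themselves do not need rebuilding.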

Provider independence is not just a technical preference. For enterprises where AI is becoming embedded in critical workflows, it is a long-term strategic requirement.

Risk 5: Explainability Failures in High-Stakes Decisions

AI tools that influence credit decisions, hiring, risk pricing, or clinical recommendations carry a distinct category of risk. When those decisions are challenged by a client, by a regulator, or in court, you need to explain how the model reached its conclusion.

Under GDPR Article 22, individuals have the right not to be subject to decisions based solely on automated processing that produce legal or similarly significant effects on them, subject to limited exceptions. Under Article 14 of the EU AI Act, human oversight mechanisms are a technical obligation for high-risk systems. These are not soft requirements. They are enforceable obligations with documented consequences for non-compliance.

Building explainability and human-in-the-loop review into AI architecture from day one is significantly easier than retrofitting it after a regulatory inquiry makes it mandatory.
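A minimal sketch of what "built in from day one" might look like: every model output carries its rationale, and a routing rule decides whether a person must sign off before the decision takes effect. The `Decision` fields, the 0.9 threshold, and the set of high-impact outcomes are illustrative assumptions, not values taken from any regulation.

```python
from dataclasses import dataclass

# Assumed policy values for illustration; each organisation sets its own.
HIGH_IMPACT_OUTCOMES = {"reject"}
CONFIDENCE_FLOOR = 0.9


@dataclass
class Decision:
    subject_id: str
    outcome: str          # e.g. "approve" or "reject"
    confidence: float     # model's own score, 0.0 to 1.0
    rationale: str        # stored so the decision can be explained later
    needs_human_review: bool = False


def route(decision: Decision) -> Decision:
    """Flag adverse or low-confidence decisions for human sign-off."""
    if (decision.outcome in HIGH_IMPACT_OUTCOMES
            or decision.confidence < CONFIDENCE_FLOOR):
        decision.needs_human_review = True
    return decision


queue = [
    Decision("app-001", "approve", 0.97, "income and history within policy"),
    Decision("app-002", "reject", 0.99, "debt ratio above threshold"),
    Decision("app-003", "approve", 0.62, "sparse credit history"),
]
for d in map(route, queue):
    status = "human review" if d.needs_human_review else "auto"
    print(d.subject_id, d.outcome, status)
```

Here an adverse outcome is always reviewed by a person regardless of model confidence, so no rejection is purely automated. The stored rationale gives the reviewer, and later a regulator, something concrete to examine.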

The Architecture That Manages All Five Risks

The best privacy-first AI tool for enterprise use is not the one with the highest benchmark scores. It is the one that makes these five risks manageable by design.

Anonymisation before inference. Provider-independent architecture. Auditable data flows. Human oversight built into high-risk workflows. And governance that is demonstrated at the technical level, not just stated in a policy document.

This is the architecture Questa AI was built around. Its upload, anonymise, and analyse pipeline is designed for enterprises in financial services, legal operations, healthcare, and compliance-heavy industries that need AI to be genuinely capable and genuinely safe at the same time.

Conclusion: The Hidden Risks Are Preventable

From real-world experience in financial services and regulated enterprise environments, the pattern is consistent. Data exposure, regulatory gaps, governance failures, vendor lock-in, and explainability weaknesses are all preventable. But preventing them requires deliberate architectural decisions made early, rather than controls retrofitted after an audit forces the issue.

The organisations that are scaling AI confidently right now are the ones that treated these risks as design requirements, not compliance afterthoughts. The window to get ahead of them is still open.

If your organisation is evaluating AI infrastructure and wants to understand what privacy-first, governance-ready AI looks like in practice, visit questa-ai.com or book a consultation with the team. The conversation is worth having before the risks you cannot see become the problems you cannot ignore.