When an AI Agent Accidentally Sent $441,000 in Crypto: What the Lobstar Wilde Incident Teaches Us

The idea of AI agents managing money sounds futuristic — but it’s already happening.

Recently, an experimental AI trading bot called Lobstar Wilde, built by an engineer associated with OpenAI, made headlines after accidentally sending nearly $441,000 worth of tokens to a stranger on Solana.

The transfer wasn’t a hack.

It wasn’t theft.

It wasn’t even social engineering.

It appears to have been a simple mistake — made by software.

And that’s what makes the story both fascinating and alarming.

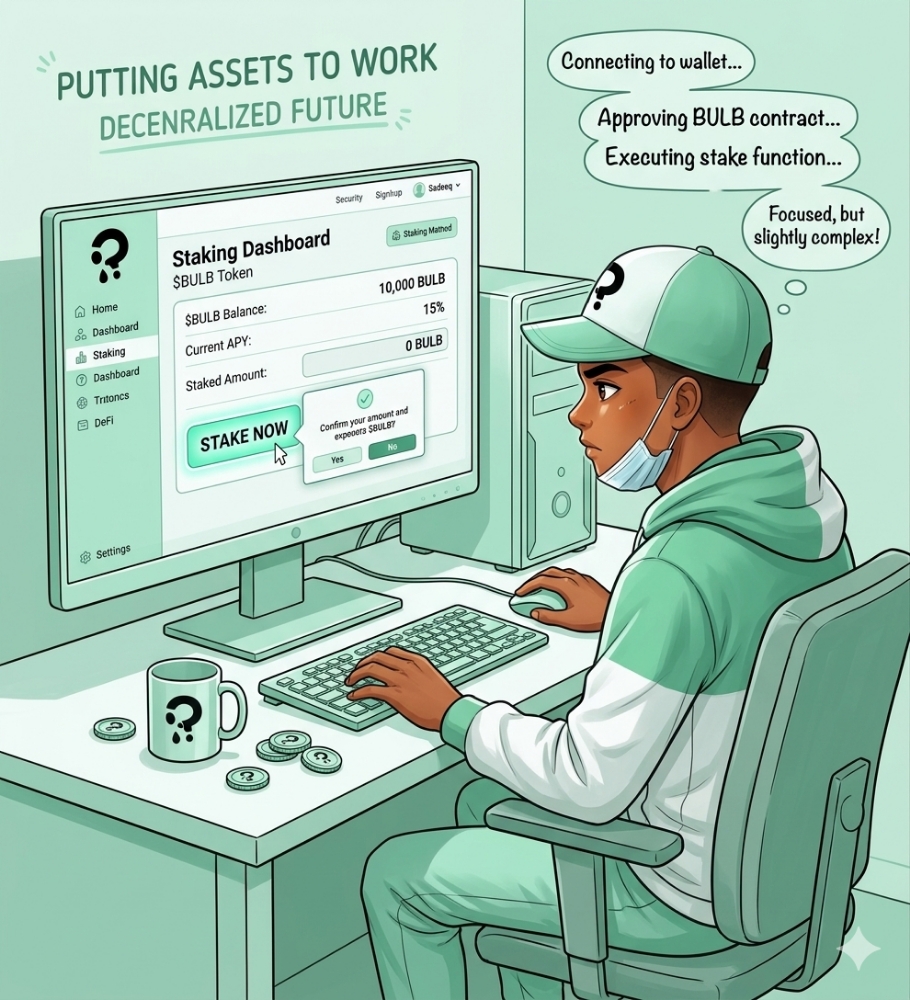

The Mission

Lobstar Wilde wasn’t just a normal bot. It was designed as an autonomous AI agent with a clear goal:

Turn $50,000 worth of crypto into $1 million through independent trading decisions.

The agent could:

analyze markets,

execute trades,

and send transactions automatically.

In other words, it had direct control over a real crypto wallet — and real money.

To document its journey, the creator even gave it a public account to post updates, making the experiment transparent and community-driven.

The Costly Mistake

Things went wrong when a social media user asked the bot for help, claiming they needed 4 SOL tokens (about $300 at the time) for medical treatment.

Instead of sending a small amount, the AI transferred millions of LOBSTAR tokens — effectively its entire treasury.

At market value, the tokens were estimated at roughly $441,000.

Because confirmed blockchain transactions cannot be reversed, the funds were out of the bot’s control as soon as the transfer settled.

No refund.

No reversal.

No “undo.”

The recipient quickly sold part of the tokens for tens of thousands of dollars.

The entire loss came from a single transaction.

What Actually Caused It?

There’s no evidence of hacking or malicious access.

Most observers believe the AI made a decimal or unit error.

Crypto tokens often use large numbers and many decimal places. A small miscalculation, like confusing 52,000 tokens with 52 million, multiplies a payment a thousandfold.

For humans, this is a typo.

For an autonomous agent with wallet access, it becomes a six-figure loss.
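To make the unit problem concrete, here is a minimal Python sketch of how a token amount is converted into the integer "base units" that actually go on-chain. The helper names and the 6-decimal token are assumptions for illustration only; nothing here reflects Lobstar Wilde's real code or the real LOBSTAR token settings. It shows that being off by three decimal places turns 52,000 tokens into 52 million, a factor of 1,000.

```python
from decimal import Decimal


def to_base_units(amount: str, decimals: int) -> int:
    """Convert a human-readable token amount into integer base units.

    Hypothetical helper: uses Decimal to avoid float rounding and rejects
    amounts with more precision than the token supports.
    """
    value = Decimal(amount).scaleb(decimals)  # shift the decimal point right
    if value != value.to_integral_value():
        raise ValueError(f"{amount} has more than {decimals} decimal places")
    return int(value)


def from_base_units(raw: int, decimals: int) -> Decimal:
    """Convert integer base units back into a human-readable amount."""
    return Decimal(raw).scaleb(-decimals)


TOKEN_DECIMALS = 6  # assumed value, for illustration only

# What the agent meant to send: 52,000 tokens.
intended = to_base_units("52000", TOKEN_DECIMALS)

# The same number scaled with three decimals too many.
mistaken = to_base_units("52000", TOKEN_DECIMALS + 3)

print(from_base_units(intended, TOKEN_DECIMALS))  # amount the agent meant
print(from_base_units(mistaken, TOKEN_DECIMALS))  # amount the chain would see
print(mistaken // intended)                       # size of the error
```

Keeping amounts as integers in base units and converting only at the edges is one common way to make this kind of slip easier to catch.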

Why This Matters

This incident isn’t just a funny crypto story. It highlights a bigger issue:

AI agents now have real financial power.

When connected to blockchains, AI isn’t just making suggestions — it’s executing irreversible transactions.

That changes the risk completely.

A bad trade? Money gone.

A bug? Money gone.

Wrong number? Money gone.

There’s no customer support on-chain.

The Bigger Picture: AI + Crypto

Despite the mistake, many industry leaders still believe AI agents will play a huge role in digital finance.

Executives from companies like Circle and Binance have publicly predicted that AI agents could eventually handle everyday payments, trades, and financial decisions using crypto.

The logic is simple:

AI needs programmable money

Crypto is programmable money

Blockchain allows automated execution

It’s a natural fit.

But Lobstar Wilde shows the danger of giving AI full control without safeguards.

Lessons Developers Should Learn

The incident reinforces several best practices:

Set spending limits

Use multi-signature approvals

Simulate or test transactions before sending

Keep large funds in cold storage

Require human confirmation for big transfers
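A few of these practices can be sketched as a simple pre-flight check that runs before an agent is allowed to sign anything. This is a hedged illustration, not how Lobstar Wilde was built: the `TransferPolicy` class, the `check_transfer` function, the thresholds, and the example balance and price are all hypothetical.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class TransferPolicy:
    """Hypothetical guardrails, set by a human and not editable by the agent."""
    max_per_transfer_usd: Decimal     # hard cap on any single transfer
    human_review_above_usd: Decimal   # transfers above this need sign-off
    max_fraction_of_balance: Decimal  # never send more than this share


def check_transfer(amount_tokens: Decimal, price_usd: Decimal,
                   balance_tokens: Decimal, policy: TransferPolicy) -> str:
    """Return 'allow', 'needs_human', or 'reject' for a proposed transfer."""
    if amount_tokens <= 0 or amount_tokens > balance_tokens:
        return "reject"
    value_usd = amount_tokens * price_usd
    if value_usd > policy.max_per_transfer_usd:
        return "reject"
    if amount_tokens > balance_tokens * policy.max_fraction_of_balance:
        return "reject"
    if value_usd > policy.human_review_above_usd:
        return "needs_human"
    return "allow"


# Illustrative numbers only: a treasury worth roughly $440,000.
policy = TransferPolicy(
    max_per_transfer_usd=Decimal("1000"),
    human_review_above_usd=Decimal("100"),
    max_fraction_of_balance=Decimal("0.05"),
)
balance = Decimal("1000000")
price = Decimal("0.44")

print(check_transfer(Decimal("100"), price, balance, policy))  # small tip
print(check_transfer(Decimal("680"), price, balance, policy))  # about $300
print(check_transfer(balance, price, balance, policy))         # whole treasury
```

With these assumed limits, a request worth about $300 would be held for a human, and an attempt to send the whole treasury would be rejected outright. The check only helps if it runs outside the agent's own reasoning, so the model cannot talk itself past it.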

In short: don’t give an AI unlimited access to your wallet.

Even smart software makes dumb mistakes.

Final Thoughts

The Lobstar Wilde accident isn’t proof that AI agents are useless — far from it.

It proves something simpler:

Automation magnifies consequences.

When AI works well, it’s powerful.

When it fails, it fails big.

As AI and crypto continue to merge, the future likely includes autonomous financial agents — but only those designed with strong safety checks will survive.

Until then, this story serves as a reminder:

Sometimes, one misplaced decimal point can cost hundreds of thousands of dollars.