Ljubljana Reveals Its Secrets

Solving Ljubljana’s rental prices using AI

Like most students, we faced the issue of finding the right apartment during our studies. Not just the right one, the optimal one. The question that arose was:

“What is the best possible apartment we could find given our budget?”

Photo by Bram van Geerenstein on Unsplash

For readers from abroad: the photo shows the city center of Ljubljana, the Slovenian capital and the country’s cornerstone of culture, politics, and education.

Web scraping with lxml in Python

Web scraping means fetching a web page and extracting data from it. Once the page is fetched, its content can be parsed, searched, reformatted, copied into a spreadsheet, and so on. Web scrapers typically take something out of a page to use it for another purpose somewhere else. We scraped the listings and, with little processing, ended up with a clean CSV file.
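To make the extraction step concrete, here is a minimal sketch with lxml. The HTML snippet and its class names are made up for illustration, since the real site's markup is not shown here. In practice the page source would first be fetched over HTTP.

```python
from lxml import html

# Stand-in for a fetched listing page. The tags and class names below are
# hypothetical; the real site's markup will differ.
SAMPLE_PAGE = """
<html><body>
  <div class="offer">
    <h2 class="title">Bezigrad, 2-sobno</h2>
    <span class="size">54,00 m2</span>
    <span class="price">650,00 EUR</span>
  </div>
  <div class="offer">
    <h2 class="title">Center, garsonjera</h2>
    <span class="size">28,50 m2</span>
    <span class="price">480,00 EUR</span>
  </div>
</body></html>
"""


def parse_offers(page_source):
    """Return one dict per offer block found in the page source."""
    tree = html.fromstring(page_source)
    offers = []
    for node in tree.xpath('//div[@class="offer"]'):
        offers.append({
            # string(...) returns the text content of the first matching node
            "title": node.xpath('string(.//h2[@class="title"])').strip(),
            "size": node.xpath('string(.//span[@class="size"])').strip(),
            "price": node.xpath('string(.//span[@class="price"])').strip(),
        })
    return offers


rows = parse_offers(SAMPLE_PAGE)
```

Each dict in rows can then be written out as one CSV line.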

Full code and data can be found on GitHub.

The first scrape gave us merely 4,000 samples. New offers are uploaded to the website every day, so we came up with a way to keep scraping the site continuously. A Raspberry Pi, our powerful little guy, runs a bash script every night at 3 AM that scrapes the new offers and appends them to the CSV file. Python logs the number of new rows to a text file. The Raspberry Pi works independently and is accessible via SSH.
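The append-and-log step could look roughly like the sketch below. It uses only the standard library. The function name, the url key used to detect already-stored offers, and the semicolon delimiter are all assumptions made for illustration.

```python
import csv
from datetime import datetime
from pathlib import Path


def append_new_rows(csv_path, log_path, scraped_rows, key="url", delimiter=";"):
    """Append rows whose key is not in the CSV yet and log how many were added."""
    csv_path, log_path = Path(csv_path), Path(log_path)

    # Collect the keys that are already stored so offers are not duplicated.
    seen = set()
    if csv_path.exists():
        with csv_path.open(newline="", encoding="utf-8") as f:
            seen = {row[key] for row in csv.DictReader(f, delimiter=delimiter)}

    new_rows = []
    for row in scraped_rows:
        if row[key] not in seen:
            seen.add(row[key])
            new_rows.append(row)

    if new_rows:
        # Write the header only when the file is created or still empty.
        write_header = not csv_path.exists() or csv_path.stat().st_size == 0
        with csv_path.open("a", newline="", encoding="utf-8") as f:
            writer = csv.DictWriter(
                f, fieldnames=list(new_rows[0]), delimiter=delimiter
            )
            if write_header:
                writer.writeheader()
            writer.writerows(new_rows)

    # One log line per run: timestamp and number of new rows.
    with log_path.open("a", encoding="utf-8") as f:
        stamp = datetime.now().isoformat(timespec="seconds")
        f.write(f"{stamp} new_rows={len(new_rows)}\n")
    return len(new_rows)
```

A nightly job would call this once with whatever the scraper returned, so the log gains one line per night.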

Data Preprocessing

We handle the data with pandas. There’s quite a lot to be done, so let’s cut to the chase. Note that we pass read_csv a different delimiter than the default comma. At this point, every column still has the object data type, and we are holding 4,400 rows in memory. Hopefully the Raspberry Pi collects enough data that someday we’ll be able to work with a much larger dataset. Let’s display the head of the data frame. Keep in mind that some words and phrases are in Slovene. Don’t let that bother you :)

Picture By Author

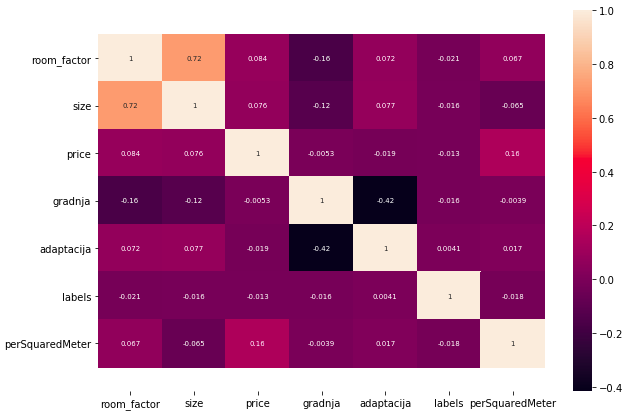
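For readers who want to follow along, loading a delimited file with a non-default separator might look like this sketch. The two rows, the column names, and the semicolon delimiter are invented for illustration. An in-memory string replaces the real CSV file.

```python
import io

import pandas as pd

# Two made-up rows in place of the scraped file. The column names and the ";"
# delimiter are assumptions, not the actual layout of the data.
RAW = io.StringIO(
    "rooms;location;size;price;built;renovated\n"
    "2-sobno;LJ-Bezigrad;54,00 m2;650,00 EUR;1975;2010\n"
    "garsonjera;LJ-Center;28,50 m2;480,00 EUR;1960;\n"
)

# sep overrides the default comma; dtype=str reads every column as text,
# so nothing is converted to a number before it has been cleaned.
df = pd.read_csv(RAW, sep=";", dtype=str)

print(df.dtypes)
print(df.head())
```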

Room factor

First up is the room factor. It arrives as a string taken from the flat description and requires some handling. There are 13 unique values, which we have to remap by hand. Lastly, let’s convert the column to a numeric one by calling pandas’ to_numeric on it. The values are now integers and will be of crucial significance to the model.
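A sketch of the remapping follows. The dictionary holds only a hypothetical subset of the 13 labels. Which number each label gets, such as counting a 1.5-room flat as 2, is an assumption.

```python
import pandas as pd

# Hypothetical subset of the raw labels and the room count assigned to each.
ROOM_MAP = {
    "soba": "1",
    "garsonjera": "1",
    "1-sobno": "1",
    "1,5-sobno": "2",
    "2-sobno": "2",
    "3-sobno": "3",
}

df = pd.DataFrame({"rooms": ["garsonjera", "2-sobno", "3-sobno", "1,5-sobno"]})

# Replace each label by hand, then let pandas turn the strings into numbers.
# errors="coerce" turns any label missing from the map into NaN instead of
# raising, which makes forgotten labels easy to spot.
df["rooms"] = df["rooms"].replace(ROOM_MAP)
df["rooms"] = pd.to_numeric(df["rooms"], errors="coerce")
```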

Location

We knew that the obvious parameter for predicting prices in the city was the location. What was not so obvious was how to feed it to the model so that the output would be correlated with it. After quite some time spent solving Python errors, this is what we came up with.

GeoPy converts the location strings into locations from which we read the latitude and longitude. We then apply the K-Means clustering algorithm with 10 clusters to those coordinates and add the resulting label to each row, so that every apartment carries the index of its cluster.

Now, we can drop both the latitude and the longitude, as well as the location description, as we won’t be needing them anymore.
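The clustering step can be sketched with scikit-learn as follows. The geocoding itself is left out because it needs network access. Synthetic coordinates scattered around Ljubljana stand in for the GeoPy results, and the column names are assumptions.

```python
import numpy as np
import pandas as pd
from sklearn.cluster import KMeans

# Synthetic coordinates in place of geocoded addresses (illustration only).
rng = np.random.default_rng(0)
df = pd.DataFrame({
    "location": [f"address {i}" for i in range(200)],
    "latitude": 46.05 + rng.normal(0, 0.02, 200),
    "longitude": 14.51 + rng.normal(0, 0.03, 200),
})

# Group the apartments into 10 areas and store the area index on each row.
kmeans = KMeans(n_clusters=10, n_init=10, random_state=0)
df["cluster"] = kmeans.fit_predict(df[["latitude", "longitude"]])

# The cluster label now carries the location signal, so the raw columns can go.
df = df.drop(columns=["latitude", "longitude", "location"])
```

One design note: the label is a category, not a quantity, so cluster 7 is not "more" than cluster 3. Whether the model treats it as a plain number or as a one-hot vector is a separate choice that this sketch does not make.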

The size and price columns were simple enough. Using pandas’ str accessor, we stripped the units and converted both columns to numeric.
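As an illustration, stripping units with the str accessor might look like this. The sample values assume a decimal comma and a dot as the thousands separator, which may not match the real data.

```python
import pandas as pd

df = pd.DataFrame({
    "size": ["54,00 m2", "28,50 m2"],
    "price": ["650,00 EUR", "1.200,00 EUR"],
})


def to_number(column):
    """Keep the leading number of each string and parse it as a float."""
    number = column.str.extract(r"([\d.,]+)")[0]    # drops the trailing unit
    number = number.str.replace(".", "", regex=False)   # thousands separator
    number = number.str.replace(",", ".", regex=False)  # decimal comma -> point
    return pd.to_numeric(number)


df["size"] = to_number(df["size"])
df["price"] = to_number(df["price"])
```

Extracting the leading number, rather than deleting letters, avoids keeping the 2 from "m2" as part of the value.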

The last two parameters are the year of construction and the year of renovation. Both have a lot of null values. Calling fillna with the ‘pad’ (forward-fill) method on the sorted columns fixed that.

As it turns out, another useful step was to subtract the year of construction and the year of renovation from the current year (2020). The result is the number of years since the building was constructed or renovated. Together with normalization, this substantially helps the loss metrics.
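The sketch below shows one possible reading of these two steps. Sorting each column on its own before forward-filling is an interpretation of "sorted columns". The text does not name the normalization, so min-max scaling is an assumption. The ffill call is the current pandas spelling of the 'pad' method.

```python
import pandas as pd

CURRENT_YEAR = 2020  # the reference year used in the article

df = pd.DataFrame({
    "built": [1960, None, 1975, None, 2005],
    "renovated": [None, 2001, None, 2015, 2018],
})

for col in ["built", "renovated"]:
    # Sorting puts the missing values last, so forward fill gives them the
    # largest known year. The assignment realigns the result on the row index.
    df[col] = df[col].sort_values().ffill()

    # Years elapsed since construction or renovation.
    age = CURRENT_YEAR - df[col]

    # Min-max scaling to the range [0, 1] (assumed normalization).
    df[col + "_age"] = (age - age.min()) / (age.max() - age.min())
```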

Splitting the data

We are specifying 10% of the data to serve as the test set. The dataset is narrowed down from the original 7 columns to 5. As you’ll see later, 10% of the training data will be spent on validation.

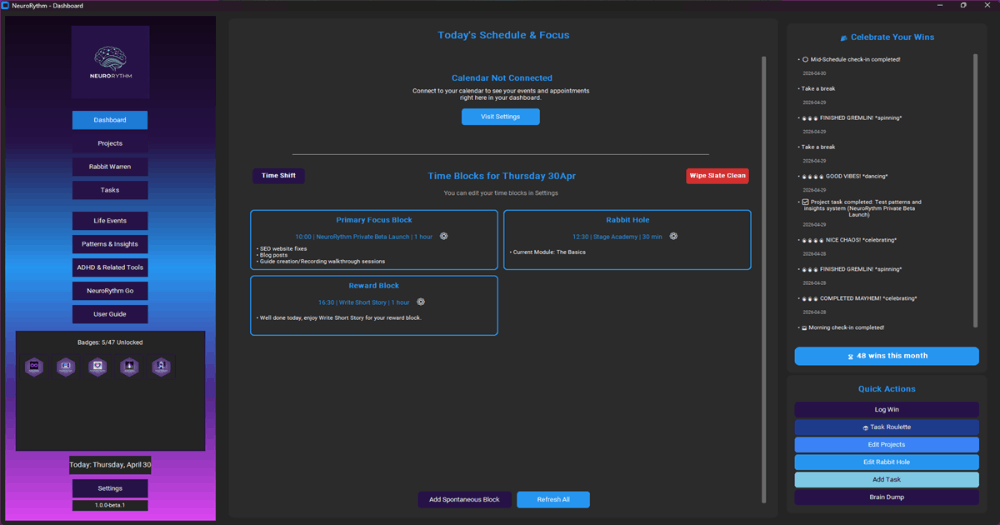
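A 10% hold-out can be sketched with pandas alone, as below. The feature names and values are placeholders, and the article's actual split call may differ.

```python
import pandas as pd

# Placeholder data frame: four hypothetical feature columns plus the target.
df = pd.DataFrame({
    "rooms": [1, 2, 3, 2] * 25,
    "size": [30.0, 55.0, 80.0, 60.0] * 25,
    "cluster": [0, 3, 7, 9] * 25,
    "built_age": [0.2, 0.5, 0.9, 0.4] * 25,
    "price": [450.0, 650.0, 900.0, 700.0] * 25,
})

# Draw 10% of the rows at random for testing; the rest is used for training.
test = df.sample(frac=0.1, random_state=42)
train = df.drop(test.index)

X_train, y_train = train.drop(columns="price"), train["price"]
X_test, y_test = test.drop(columns="price"), test["price"]
```

The validation share would then come out of the training part, for example through the validation_split argument of Keras's model.fit.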

Hyperparameter tuning

Tuning machine learning hyperparameters is a tedious yet crucial task, as the performance of an algorithm can be highly dependent on the choice of hyperparameters. Manual tuning takes time away from important steps of the machine learning pipeline like feature engineering and interpreting results. Grid and random search are hands-off, but require long run times because they waste time evaluating unpromising areas of the search space. Increasingly, hyperparameter tuning is done by automated methods that aim to find optimal hyperparameters in less time using an informed search with no manual effort necessary beyond the initial set-up.

This tutorial will focus on the following steps:

- Set up the experiment and the HParams summary

- Adapt TensorFlow runs to log hyperparameters and metrics

- Start runs and log them all under one parent directory

- Visualize the results in TensorBoard’s HParams dashboard

We will tune 4 hyperparameters: the dropout rate, the optimizer, the learning rate, and the number of units of a specific Dense layer. TensorFlow makes this easy with the TensorBoard plugins. There’s a lot of code, but essentially we iterate over the values of our 4 hyperparameters and keep the combination with which the model reaches the highest accuracy. We also log everything, and the TensorBoard callback makes sure all the information is visible in TensorBoard. The run function stores the metrics and keeps a log of every evaluation of the hyperparameters.
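For orientation, the setup with the TensorBoard HParams API could look like the sketch below. The HP_ names mirror those used in the tuning loop, but the value ranges are hypothetical because only the winning values are reported. METRIC_NAME and both helper functions are illustrative names too, not the article's run function.

```python
import tensorflow as tf
from tensorboard.plugins.hparams import api as hp

# Hypothetical search space for the four hyperparameters.
HP_NUM_UNITS = hp.HParam("num_units", hp.Discrete([32, 64]))
HP_DROPOUT = hp.HParam("dropout", hp.RealInterval(0.2, 0.3))
HP_OPTIMIZER = hp.HParam("optimizer", hp.Discrete(["adam", "sgd"]))
HP_LEARNING_RATE = hp.HParam("learning_rate", hp.Discrete([0.001, 0.01]))
METRIC_NAME = "val_loss"  # hypothetical metric tag


def write_experiment_config(log_dir):
    """Describe the search space once, in the parent log directory."""
    writer = tf.summary.create_file_writer(log_dir)
    with writer.as_default():
        hp.hparams_config(
            hparams=[HP_NUM_UNITS, HP_DROPOUT, HP_OPTIMIZER, HP_LEARNING_RATE],
            metrics=[hp.Metric(METRIC_NAME, display_name="Validation loss")],
        )
    writer.flush()


def log_trial(run_dir, hparams, metric_value):
    """Record one trial: the hyperparameter values used and the metric reached."""
    writer = tf.summary.create_file_writer(run_dir)
    with writer.as_default():
        hp.hparams(hparams)  # the values of this trial
        tf.summary.scalar(METRIC_NAME, metric_value, step=1)
    writer.flush()
```

In a real run, metric_value would come from evaluating the trained model. Every trial writes to its own subdirectory of one parent directory, so the HParams dashboard can compare them side by side.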

And this is the section where it all comes together. A massive for loop iterates over all the values and keeps a log based on the model’s evaluation of specific hyperparameters.

The best combination of hyperparameters is stored in a dictionary called hparams, which can be fed straight to a model. If you think about it, the hyperparameter tuning could by itself produce a concrete model. I decided to build the model again with the best hparams, but with a substantially larger number of epochs. There was no need to run the tuning itself for that many epochs, as all we needed from it were the parameters that give the best result.

According to hyperparameter tuning, the optimal parameters are 64 units, 0.3 for the dropout rate, stochastic gradient descent as the optimizer, and a learning rate of 0.001.

```python
# HP_NUM_UNITS, HP_DROPOUT, HP_OPTIMIZER, HP_LEARNING_RATE and run() are
# defined elsewhere in the full code.
session_num = 0

# Try every combination of the four hyperparameters.
for num_units in HP_NUM_UNITS.domain.values:
    for dropout_rate in (HP_DROPOUT.domain.min_value, HP_DROPOUT.domain.max_value):
        for optimizer in HP_OPTIMIZER.domain.values:
            for learning_rate in HP_LEARNING_RATE.domain.values:
                hparams = {
                    HP_NUM_UNITS: num_units,
                    HP_DROPOUT: dropout_rate,
                    HP_OPTIMIZER: optimizer,
                    HP_LEARNING_RATE: learning_rate,
                }
                run_name = "run-%d" % session_num
                print('--- Starting trial: %s' % run_name)
                print({h.name: hparams[h] for h in hparams})
                # Each trial logs under its own subdirectory of the parent log dir.
                history = run('logs/hparam_tuning/' + run_name, hparams)
                session_num += 1
```

Regularization

We used two methods of regularization; the list below also covers the Huber loss function. Let’s take a brief look at them.

- Dropout is a regularization technique that prevents complex co-adaptations on training data by randomly dropping neurons during training. With a dropout rate of 0.2, the outputs of 20% of the neurons are set to 0 at each training step, so those neurons do nothing for that step.

- L1 regularization, as in Lasso regression, adds the “absolute value of magnitude” of the coefficients as a penalty term to the loss. It shrinks the coefficients of the less important features toward zero.

- The Huber loss is a loss function used in robust regression that is less sensitive to outliers in data than the squared error loss. It is quadratic for small values of the error a and linear for large values. (Source: Wiki)

For target = 0, the loss increases as the error increases. However, the speed with which it increases depends on the 𝛿 value. In fact, Grover (2019) writes about this as follows: Huber loss approaches MAE when 𝛿 ~ 0 and MSE when 𝛿 ~ ∞ (large numbers).
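To see the quadratic and linear regimes in numbers, here is a small plain-Python sketch of the Huber loss in its standard textbook form. It is meant for illustration and is not the TensorFlow implementation used for training.

```python
def huber_loss(y_true, y_pred, delta=1.0):
    """Mean Huber loss: quadratic for |error| <= delta, linear beyond it."""
    total = 0.0
    for target, prediction in zip(y_true, y_pred):
        a = abs(target - prediction)
        if a <= delta:
            total += 0.5 * a * a                # behaves like squared error
        else:
            total += delta * (a - 0.5 * delta)  # grows linearly, like MAE
    return total / len(y_true)


small_error = huber_loss([0.0], [0.5])  # inside the quadratic zone
large_error = huber_loss([0.0], [3.0])  # in the linear zone
```

With delta = 1, an error of 3 costs 2.5 under the Huber loss, whereas half the squared error would charge 4.5. This is why a single outlier pulls the model less.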

Model performance

About 80% of our predictions are no more than 30% off the actual price. This might seem like a lot, but note that this is our first preliminary model, trained on merely 4,400 data points. We expect that in three months’ time, when the number of data entries should exceed 5,000, our results will be much better.
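One way to compute such a figure is the share of predictions that fall within a relative tolerance of the true price. The helper below is a sketch under that reading of the metric. The prices are made up and are not the model's results.

```python
def share_within_tolerance(y_true, y_pred, tolerance=0.30):
    """Fraction of predictions whose relative error is at most `tolerance`."""
    hits = sum(
        abs(prediction - actual) <= tolerance * abs(actual)
        for actual, prediction in zip(y_true, y_pred)
    )
    return hits / len(y_true)


# Made-up monthly rents and predictions, for illustration only.
actual_prices = [500, 600, 700, 800, 1000]
predicted_prices = [450, 900, 710, 1000, 1250]
share = share_within_tolerance(actual_prices, predicted_prices)
```

Here four of the five predictions land within 30% of the actual rent; the second one, off by 50%, does not.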

Conclusion

AI continues to become the go-to tool for coming up with neat solutions to everyday problems. In our case, we came up with an efficient machine learning model that can, to some extent, predict the rental prices of apartments, making the student experience all the more enjoyable.

What started out as a hobby project turned out to be quite a successful TensorFlow model for predicting rental prices in Slovenia’s capital.

Connect and read more

Feel free to contact me via any social media. I’d be happy to get your feedback, acknowledgment, or criticism. Let me know what you create!

LinkedIn, Medium, GitHub, Gmail