2026 Is The Year Privacy Gets Real (And Really Technical)

Privacy has been important for a decade. In 2026, it turns into homework you actually have to finish. Between a wave of new state privacy laws, enforcement agencies finally checking what your code does (not just what your policy says), and universal opt‑out signals becoming mandatory in more places, the vibes have shifted from “we’ll fix it later” to “ship it compliant or don’t ship it at all.”

If you work in tech, marketing, or product, this is the year privacy stops being a side quest and becomes part of the main storyline. Multiple states are flipping the switch on new laws, regulators are coordinating across borders, and users are slowly figuring out they can broadcast “do not track me” from their browser with things like Global Privacy Control and let your site deal with it.

The US Privacy Map Just Got Crowded

In 2018, California’s CCPA was basically the only game in town. Fast‑forward to 2026, and a broad majority of Americans now live in a state with a comprehensive privacy law on the books. A recent breakdown from MultiState notes that with new laws taking effect in Indiana, Kentucky, and Rhode Island on January 1, 2026, there are now twenty states with full‑blown privacy regimes in force, with more queued up behind them through 2027.

These new laws all hit the greatest hits: notice, access, deletion, correction, and opt‑out of sales and targeted ads. But the details differ enough to make a simple “copy California” strategy dangerous. Analyses from firms like Baker Donelson and Axiom point out that while Kentucky, Indiana, and Rhode Island largely mirror the Virginia model, they diverge on applicability thresholds, the definition of “sale,” and which data categories count as sensitive. That is exactly how you end up with five slightly different versions of your consent banner and everyone annoyed. The pattern is obvious, though: more transparency, more user control, and far less tolerance for silent data hoarding.

Connecticut, Colorado, And The “Privacy For Real” States

Some states are not just adding privacy laws, they’re sharpening them. Connecticut’s 2026 amendments are a good example of how the screws are tightening quietly but aggressively. As summarized in MultiState’s 2026 update, Connecticut is dropping its threshold from 100,000 to 35,000 consumers and pulling in any company processing sensitive data, even if they don’t hit the usual volume numbers. Translation: a lot of smaller and mid‑sized companies that thought they were under the radar are suddenly very much on it.
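
To make the threshold change concrete, here is a minimal sketch of a scoping check. It uses only the two numbers and the sensitive‑data trigger mentioned above; the `Footprint` type, its field names, and the inclusive comparison are assumptions for illustration, and the real statute has exemptions and extra conditions this ignores.

```python
from dataclasses import dataclass

# Thresholds quoted in the paragraph above; everything else is illustrative.
OLD_CONSUMER_THRESHOLD = 100_000
NEW_CONSUMER_THRESHOLD = 35_000


@dataclass
class Footprint:
    """Hypothetical summary of a company's Connecticut data footprint."""
    ct_consumers: int               # Connecticut consumers whose data is processed
    processes_sensitive_data: bool  # any sensitive data at all


def in_scope_before(fp: Footprint) -> bool:
    # Old rule as described: volume only (inclusive boundary is an assumption).
    return fp.ct_consumers >= OLD_CONSUMER_THRESHOLD


def in_scope_after(fp: Footprint) -> bool:
    # New rule as described: lower volume bar, or any sensitive data processing.
    return fp.ct_consumers >= NEW_CONSUMER_THRESHOLD or fp.processes_sensitive_data


mid_sized = Footprint(ct_consumers=50_000, processes_sensitive_data=False)
print(in_scope_before(mid_sized), in_scope_after(mid_sized))
```

A mid‑sized company with 50,000 Connecticut consumers falls outside the old check and inside the new one, which is the "suddenly on the radar" effect in miniature.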

Colorado is making similar moves, especially around what counts as “sensitive.” A 2026 overview by Axiom highlights how the Colorado Privacy Act now explicitly treats precise geolocation and certain biometric and neural data as sensitive, which triggers stricter consent and handling requirements. When a law starts name‑dropping “neural data,” you know regulators are trying to future‑proof against brain‑computer interfaces, advanced wearables, and whatever else the hardware people dream up next. The message is clear: 2026 privacy isn’t just about browser cookies anymore; it’s about anything that can be used to map you, track you, or model your behavior.

Universal Opt‑Out Signals Go From Niche To Mandatory

Remember Global Privacy Control (GPC), the browser‑level “please stop stalking me” signal that looked like a nice‑to‑have a few years ago? In 2026, it’s one of the most practical levers ordinary users have, and regulators are backing it with teeth. A detailed guide from Didomi notes that as of January 1, 2026, twelve US states require businesses that sell or share personal data or engage in targeted advertising to honor universal opt‑out mechanisms or opt‑out preference signals, including California, Colorado, Connecticut, Oregon, Texas, Minnesota, Maryland, and others. In those states, your site has to treat that signal as if the user manually opted out on your own settings page.
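
On the wire, GPC arrives as the request header `Sec-GPC: 1`. The sketch below shows one way a back end might fold that signal into its opt‑out decision. The function names and the `UOOM_STATES` set are hypothetical, and the set only contains the states named above, so it is incomplete by design.

```python
from typing import Mapping

# States named in the paragraph above. The full list of twelve is not given
# there, so this set is deliberately incomplete and purely illustrative.
UOOM_STATES = {"CA", "CO", "CT", "OR", "TX", "MN", "MD"}


def has_gpc_signal(headers: Mapping[str, str]) -> bool:
    """True when the request carries the GPC header with the value 1."""
    for name, value in headers.items():
        if name.lower() == "sec-gpc":  # header names are case-insensitive
            return value.strip() == "1"
    return False


def effective_opt_out(headers: Mapping[str, str], user_state: str,
                      manual_opt_out: bool) -> bool:
    """Treat a GPC signal like a manual opt-out where the state requires it."""
    if manual_opt_out:
        return True
    return has_gpc_signal(headers) and user_state in UOOM_STATES


print(effective_opt_out({"Sec-GPC": "1"}, "CO", manual_opt_out=False))
```

One design option worth weighing is to drop the state check and honor the signal for every visitor, which trades some ad revenue for a much simpler code path.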

This isn’t just a theoretical requirement anymore. Didomi’s enforcement recap points back to the Sephora CCPA case and then to a coordinated sweep in September 2025 where the California Privacy Protection Agency and the attorneys general of California, Colorado, and Connecticut specifically targeted companies that ignored GPC. On top of that, new California privacy regulations effective January 1, 2026, require businesses to visibly indicate when an opt‑out preference signal has been processed. Think explicit “Opt‑Out Request Honored” messaging when a GPC user lands on your site. Privacy UX can’t just be pretty anymore, it has to show its work.
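
A small sketch of the "show its work" idea: the confirmation label is derived from what the back end actually did, not from the mere presence of the signal. The function name and its two flags are assumptions; only the label wording comes from the text above.

```python
from typing import Optional

HONORED_LABEL = "Opt-Out Request Honored"  # wording quoted in the text above


def opt_out_status_label(signal_received: bool, opt_out_applied: bool) -> Optional[str]:
    """Return the label only when the signal was received AND acted on."""
    if signal_received and opt_out_applied:
        return HONORED_LABEL
    return None  # nothing to claim, so the UI shows nothing


print(opt_out_status_label(signal_received=True, opt_out_applied=True))
```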

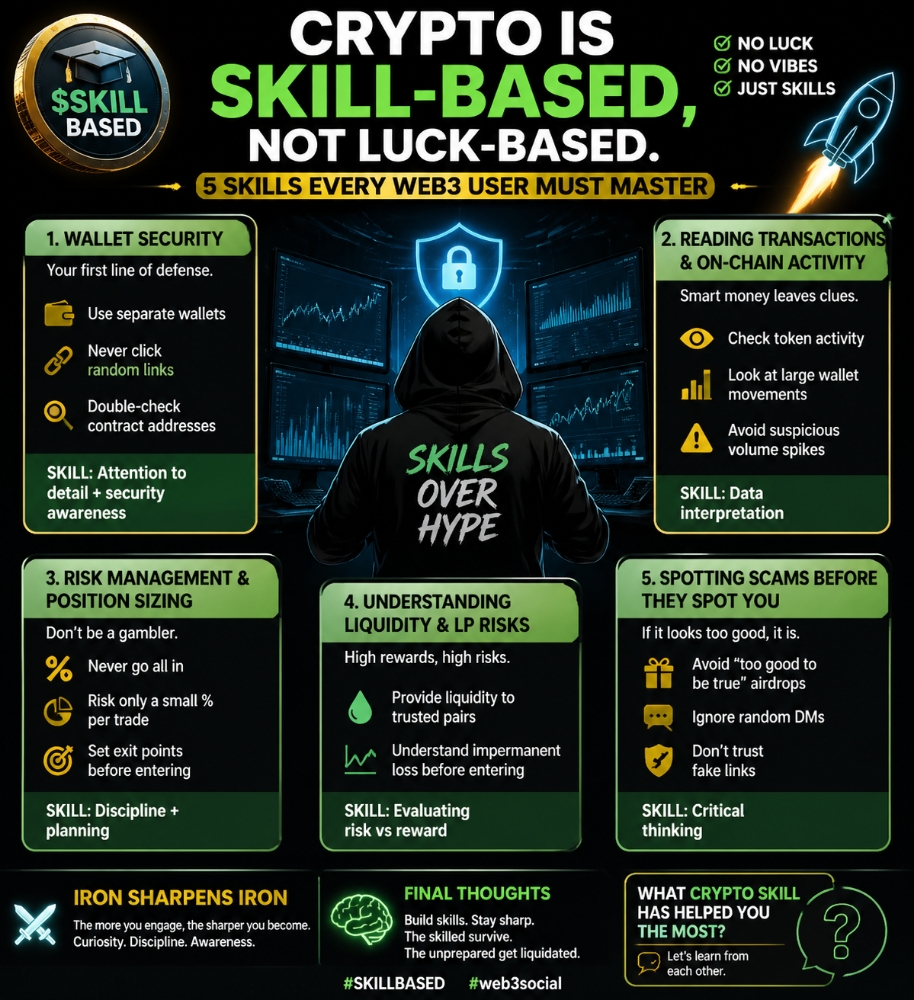

Regulators Stop Reading Policies And Start Reading Code

The old privacy playbook was simple. Lawyer writes policy, designer adds banner, engineer toggles a consent flag somewhere, everyone goes to lunch. Regulators have started to call that bluff. A 2026 global privacy compliance guide points out that enforcement agencies are moving past checkbox audits and into what actually happens in your flows: can users find the controls, do opt‑outs really cut off third‑party trackers, and does your back‑end match your front‑end promises. If your banner says “we don’t sell your data” while your adtech stack is aggressively doing the opposite, that’s not just bad optics anymore, that’s an enforcement risk.
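
The "do opt‑outs really cut off third‑party trackers" question can be turned into an automated check. The sketch below is a simplified model, not any regulator's test: the `Tag` type, the purpose labels, and the rule that only third‑party advertising tags are blocked are all assumptions you would replace with your own tracking plan.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Tag:
    name: str
    third_party: bool
    purpose: str  # e.g. "advertising", "analytics", "essential"


# Purposes that should stop firing for an opted-out user (assumed mapping).
BLOCKED_WHEN_OPTED_OUT = {"advertising"}


def allowed_tags(tags: Iterable[Tag], opted_out: bool) -> List[Tag]:
    """Tags that policy permits to load for this user."""
    if not opted_out:
        return list(tags)
    return [t for t in tags
            if not (t.third_party and t.purpose in BLOCKED_WHEN_OPTED_OUT)]


def audit(fired: Iterable[str], tags: Iterable[Tag], opted_out: bool) -> List[str]:
    """Names of tags that fired even though policy says they should not."""
    permitted = {t.name for t in allowed_tags(tags, opted_out)}
    return sorted(name for name in fired if name not in permitted)


plan = [Tag("ad_pixel", True, "advertising"), Tag("session", False, "essential")]
print(audit(["ad_pixel", "session"], plan, opted_out=True))
```

Feeding `audit` the tag names observed in a real browser session, for example from a network log, is what closes the gap between the banner's promise and the stack's behavior.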

You see the same shift in how impact assessments are being used. California’s new regulations require risk assessments for processing activities that present significant risk to consumer privacy, with initial assessments due by April 1, 2028, and specific obligations around automated decision‑making and profiling kicking in by 2027. Guidance from firms tracking 2026 developments recommends treating these assessments (California’s counterpart to GDPR‑style Data Protection Impact Assessments) less like paperwork and more like design constraints for data flows, retention, and access patterns. The net effect is that privacy conversations are moving out of the policy doc and into architecture diagrams, tracking plans, and code reviews.

Automated Decision-Making, AI, And The Human In The Loop

All of this is colliding with the rise of AI and automated decision‑making. The EU has been wrestling with this for a while, and a December 2023 decision of the Court of Justice of the European Union in the SCHUFA credit scoring case clarified just how strict the GDPR can be on “solely automated” decisions that significantly affect individuals. Under GDPR Article 22, people have the right not to be subject to such decisions and to demand human review, which changes the vibe considerably if an algorithm just nuked your loan application.
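
In code, a human in the loop usually means a routing rule in front of the final decision. The sketch below is a deliberately blunt version: every decision that is both solely automated and significant goes to a reviewer. The `Decision` type and the route names are hypothetical, and the rule ignores the exceptions Article 22 allows, so treat it as a starting point rather than a reading of the law.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    subject_id: str
    outcome: str              # e.g. "approve" or "deny"
    solely_automated: bool    # no meaningful human involvement so far
    significant_effect: bool  # legal or similarly significant effect


def route(decision: Decision) -> str:
    """Pick a queue: 'human_review' or 'auto_finalize' (names are illustrative)."""
    if decision.solely_automated and decision.significant_effect:
        return "human_review"
    return "auto_finalize"


loan = Decision("user-123", "deny", solely_automated=True, significant_effect=True)
print(route(loan))
```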

US state laws are more timid here so far. As privacy attorneys analyzing automated decision‑making have pointed out, most US frameworks give consumers some rights to opt out of certain profiling or targeted advertising, but they stop short of the strong “talk to a human and argue your case” rights you see in Europe. Meanwhile, the EU AI Act is rolling out a separate, risk‑based regime for AI, with full enforcement ramping up around 2026, requiring human oversight, documentation, and stricter controls for “high‑risk” AI systems in credit, employment, and law enforcement. If you’re building or buying AI models that affect people’s livelihoods, you’re no longer just shipping software; you’re signing up for a regulatory relationship.

2026 Privacy Strategy

So where does that leave actual teams trying to ship things without getting buried in legal memos? The emerging pattern is pretty clear. Legal teams track the multi‑state maze using primers like “New State Privacy Laws Expand Consumer Data Control in 2026” and global updates, but engineering and product own whether those rights are actually usable in the product. That means unified consent and preference systems, centralized data subject rights workflows, and privacy requirements baked into the roadmap instead of taped onto the sprint after launch.
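
A centralized data subject rights workflow can start as something this small: one entry point that fans a request out to every system holding user data. The `RightsRouter` class, the right names, and the handler signature are assumptions for illustration; a production version would add identity verification, deadlines, and an audit trail.

```python
from typing import Callable, Dict, List

Handler = Callable[[str], str]  # takes a user id, returns a short status string


class RightsRouter:
    """Single entry point that fans a rights request out to data systems."""

    SUPPORTED = ("access", "deletion", "correction", "opt_out")

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Handler]] = {r: [] for r in self.SUPPORTED}

    def register(self, right: str, handler: Handler) -> None:
        if right not in self._handlers:
            raise ValueError(f"unsupported right: {right}")
        self._handlers[right].append(handler)

    def submit(self, right: str, user_id: str) -> List[str]:
        if right not in self._handlers:
            raise ValueError(f"unsupported right: {right}")
        # Run every registered system in registration order.
        return [handler(user_id) for handler in self._handlers[right]]


router = RightsRouter()
router.register("deletion", lambda uid: f"crm: deleted {uid}")
router.register("deletion", lambda uid: f"warehouse: deleted {uid}")
print(router.submit("deletion", "user-123"))
```

The useful property is that a new data store cannot quietly skip deletion requests: if it holds user data, it registers a handler, and the router makes the gap visible when it does not.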

For users, 2026 might be the first time privacy controls feel like they actually do something. With GPC recognized in more states, enforcement actions piling up against misconfigured banners in cases highlighted by Didomi’s GPC enforcement roundup, and regulators forcing clearer UX around opt‑outs, the default experience should slowly tilt away from surveillance‑by‑default. For builders, the takeaway is simple. We’re past the era of pretty privacy pages and into the era of privacy‑literate code, clear UX, and real governance. If your product story in 2026 doesn’t include a real privacy story, you’re basically shipping half the feature.

Thanks for reading. If you’re into privacy, security, and the tools that actually make this stuff usable in real life, you’ll probably like what I’m building over on my website.

Stay curious, keep breaking things (responsibly), and keep learning.